Implementing Scalability and Elasticity

In this chapter from AWS Certified SysOps Administrator - Associate (SOA-C02) Exam Cram, you will examine scaling, request offloading, and loose coupling as strategies that can enable an application to meet demand while maintaining cost-effectiveness.

Ensuring your application’s infrastructure is scalable and elastic delivers a double benefit for the application. First, by adding more resources dynamically when required, you can adapt to any amount of traffic and ensure you do not leave any request unanswered. Second, by removing resources, you ensure the application is cost-effective when few or no requests are being received. However, designing a scalable/elastic application is not always an easy task.

In this chapter, we examine scaling, request offloading, and loose coupling as strategies that can enable an application to meet demand while maintaining cost-effectiveness. Ensuring your application is scalable and elastic also builds a good underlying foundation to achieve high availability and resilience, which we discuss in Chapter 5, “High Availability and Resilience.”

Scaling in the Cloud

This section covers the following official AWS Certified SysOps Administrator - Associate (SOA-C02) exam domains:

Domain 2: Reliability and Business Continuity

Domain 3: Deployment, Provisioning, and Automation

For any application to be made elastic and scalable, you need to consider the sum of the configurations of its components. Any weakness in any layer or service that the application depends on can reduce the application’s scalability and elasticity. Any reduction in scalability and elasticity can, in turn, introduce weaknesses in the high availability and resilience of your application. At the end of the day, a poorly scalable, rigid application with no guarantee of availability can have a tangible impact on the bottom line of any business.

The following factors need to be taken into account when designing a scalable and elastic application:

Compute layer: The compute layer receives a request and responds to it. To make an application scalable and elastic, you need to consider how to scale the compute layer. Can you scale on metrics such as CPU usage, memory usage, and number of connections, or do you need to consider scaling at the finest granularity: per request? Also follow the best practice of keeping the compute layer disposable. There should never be any persistent data within the compute layer.

Persistent layer: Where is the data being generated by the application stored? Is the storage layer decoupled from the instances? Is the same (synchronous) data available to all instances, or do you need to account for asynchronous platforms and eventual consistency? You should always ensure that the persistent layer is designed to be scalable and elastic and take into account any issues potentially caused by the replication configuration.

Decoupled components: Are you scaling the whole application as one, or is each component or layer of the application able to scale independently? You need to always ensure each layer or section of the application can scale separately to achieve maximum operational excellence and lowest cost.

Asynchronous requests: Does the compute platform need to process every request as soon as possible within the same session, or can you schedule the request for processing at a later time? When requests are allowed to take longer to process (many seconds, perhaps even minutes or hours), you should always decouple the application with a queue service so that requests are handled asynchronously, meaning at a later time. Using a queue enables you to buffer the requests, ensuring you accept all requests on the incoming side of the application while the back end processes them with predictable performance. A well-designed, asynchronously decoupled application should almost never respond with a 5xx (server error) HTTP status code.
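The buffering idea can be sketched in plain Python. This is a minimal, single-process illustration under stated assumptions: `queue.Queue` stands in for a managed queue service such as Amazon SQS, worker threads stand in for back-end instances, and the function name `run_decoupled` and the doubling "work" are hypothetical. The front end enqueues and accepts every request immediately, while a fixed number of workers drains the queue at its own pace.

```python
import queue
import threading
import time
from typing import Iterable, List, Tuple


def run_decoupled(requests: Iterable[int], worker_count: int = 2,
                  work_seconds: float = 0.01) -> Tuple[int, List[int]]:
    """Accept every request at once, then process the backlog asynchronously."""
    buffer: "queue.Queue" = queue.Queue()  # stand-in for a managed queue (assumption)
    results: List[int] = []
    lock = threading.Lock()

    def worker() -> None:
        while True:
            item = buffer.get()
            if item is None:            # sentinel: no more work for this worker
                return
            time.sleep(work_seconds)    # simulate slow back-end processing
            with lock:
                results.append(item * 2)  # hypothetical "processing" step

    workers = [threading.Thread(target=worker) for _ in range(worker_count)]
    for w in workers:
        w.start()

    accepted = 0
    for request in requests:  # front end: enqueue and acknowledge immediately
        buffer.put(request)
        accepted += 1

    for _ in workers:         # one sentinel per worker, queued after all requests
        buffer.put(None)
    for w in workers:
        w.join()
    return accepted, sorted(results)
```

Because the sentinels are queued after every request, each worker exits only once the backlog is drained; the acceptance rate never depends on how fast the back end is, and `worker_count` alone sets the processing rate.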

Assessing your application from these points of view should give you a rough idea of the scalability and elasticity of the platform. When you have a good idea of the scalability/elasticity, also consider any specific metrics within the defined service-level agreement (SLA) of the application. After both are defined, assess whether the application will meet the SLA in its current configuration. Make a note if you need to take action to improve the scalability/elasticity, and continuously reassess, because both the application requirements and the defined SLA are likely to change over time.

After the application has been designed to meet its defined SLA, you can use the cloud metrics the platform provides at no additional cost to implement automated scaling and meet demand in several different ways. We discuss how to implement this automation with AWS Auto Scaling later in this section.

Horizontal vs. Vertical Scaling

The general consensus is that there are only two ways (with minor variance, depending on the service or platform) to scale a service or an application:

Vertically, by adding more power (more CPU, memory, disk space, network bandwidth) to an application instance

Horizontally, by adding more instances to an application layer, thus increasing the power in increments equal to the size of each instance added

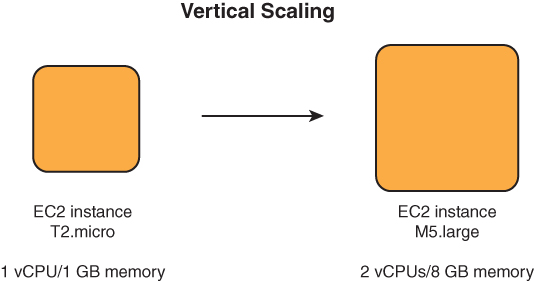

A great benefit of vertical scaling is that it can be applied in any circumstance, even without any application support. Because you are maintaining one unit and increasing its size, you can vertically scale up to the maximum size of the unit in question, which is defined by the largest size that the service supports. For example, at the time of writing, the largest instance size supported on EC2 (u-24tb1.metal) offers 448 vCPUs and 24 TB (that’s 24,576 GB!) of memory, while the smallest still-supported size (t2.nano) has only 1 vCPU and 0.5 GB of memory. Additionally, there are plenty of instance types and sizes to choose from, which means that you can vertically scale an application to the exact size you need at a certain moment in time. This same fact applies to other instance-based services such as EMR, RDS, and DocumentDB. Figure 4.1 illustrates vertical scaling of instances.

FIGURE 4.1 Vertical scaling

However, when thinking about scalability, you have to consider the drawbacks of vertical scaling. We mentioned maximum size, but another major drawback is what makes vertical scaling impractical: it relies on a single instance. Because AWS essentially operates on EC2 as the underlying design, EC2 is a great example of why a single instance is not the way to go. Each instance you deploy runs on a hypervisor in a rack in a datacenter, and this datacenter can only ever be part of one availability zone. In Chapter 1, “Introduction to AWS,” we defined an availability zone as a fault isolation environment, meaning any failure, whether it is due to a power or network outage or even an earthquake or flood, is always isolated to one availability zone. Although a single instance is vertically scalable, it is by no means highly available, nor does vertical scaling make the application very elastic, because every time you change to a different instance type, you need to stop and start the instance.

This is where horizontal scaling steps in. With horizontal scaling, you add more instances (scale-out) when traffic to your application increases and remove instances (scale-in) when traffic to your application is reduced. You still need to select the appropriate scaling step, which is, of course, defined by the instance size. When selecting the size of the instances in a scaling environment, always ensure that they can do the job and don’t waste resources. Figure 4.2 illustrates horizontal scaling.

FIGURE 4.2 Horizontal scaling

In the ideal case, the application is stateless, meaning it does not store any data in the compute layer. In this case, you can easily scale the number of instances instead of scaling one instance up or down. The major benefit is that you can scale across multiple availability zones, thus inherently achieving high availability as well as elasticity. This is why the best practice on AWS is to create stateless, disposable instances and decouple the processing from the data and the layers of the application from each other. However, a potential drawback of horizontal scaling is that some applications do not support it. The reality is that sooner or later you will come upon a case where you need to support an “enterprise” application, migrated from some virtualized infrastructure, that simply does not support adding multiple instances to the mix. In this case, you can still use AWS services to make the application highly available and recover it automatically in case of a failure. For such instances, you can use Amazon EC2 automatic recovery, on the instance types that support it, which automatically recovers the instance onto healthy hardware in case of an underlying system impairment or failure.
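One way to wire up this recovery is a CloudWatch alarm on the `StatusCheckFailed_System` metric whose action is the Region-specific EC2 recover ARN. The sketch below only builds the keyword arguments for boto3's `cloudwatch.put_metric_alarm` call, so it runs without AWS credentials; the helper name, the alarm-name pattern, and the two 60-second evaluation periods are illustrative assumptions rather than required values.

```python
def recovery_alarm_params(instance_id: str, region: str) -> dict:
    """Build put_metric_alarm arguments that trigger EC2 instance recovery.

    Hypothetical helper: the alarm name and evaluation window are examples.
    """
    return {
        "AlarmName": f"recover-{instance_id}",
        "Namespace": "AWS/EC2",
        "MetricName": "StatusCheckFailed_System",  # underlying host/system check
        "Dimensions": [{"Name": "InstanceId", "Value": instance_id}],
        "Statistic": "Maximum",
        "Period": 60,                 # one-minute data points
        "EvaluationPeriods": 2,       # two failing minutes in a row
        "Threshold": 1.0,
        "ComparisonOperator": "GreaterThanOrEqualToThreshold",
        # The recover action ARN is specific to the instance's Region.
        "AlarmActions": [f"arn:aws:automate:{region}:ec2:recover"],
    }
```

To apply it, you would pass the result to `boto3.client("cloudwatch").put_metric_alarm(**params)` with a real instance ID and Region.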

Another potential issue that can prevent horizontal scalability is the requirement to store some data in a stateful manner. Thus far, we have said that you need to decouple the state of the application from the compute layer and store it in a back-end service—for example, a database or in-memory service. However, the scalability is ultimately limited by your ability to scale those back-end services that store the data. In the case of the Relational Database Service (RDS), you are always limited to one primary instance that handles all writes because both the data and metadata within a traditional database need to be consistent at all times. You can scale the primary instance vertically; however, there is a maximum limit the database service will support, as Figure 4.3 illustrates.

FIGURE 4.3 Database bottlenecking the application

You can also create a Multi-AZ deployment, which creates a secondary, synchronous replica of the primary database in another availability zone; however, Multi-AZ does not make the application more scalable because the replica is inaccessible to any SQL operations and is provided for the sole purpose of high availability. Another option is adding read replicas to the primary instance to offload read requests. We delve into more details on read replicas later in this chapter and discuss database high availability in Chapter 5.

AWS Auto Scaling

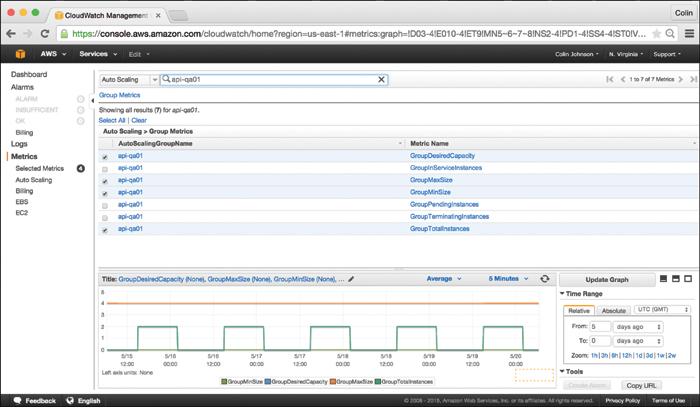

After you design all the instance layers to be scalable, you should take advantage of AWS Auto Scaling to automate the scale-in and scale-out operations for your application layers based on performance metrics, for example, EC2 CPU usage, network throughput, and other metrics captured in Amazon CloudWatch.

AWS Auto Scaling can scale the following AWS services:

EC2: Add or remove instances from an EC2 Auto Scaling group.

EC2 Spot Fleets: Add or remove instances from a Spot Fleet request.

ECS: Increase or decrease the number of tasks (containers) in an ECS service.

DynamoDB: Increase or decrease the provisioned read and write capacity.

RDS Aurora: Add or remove Aurora read replicas from an Aurora DB cluster.
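Apart from EC2 Auto Scaling groups, the targets in this list are scaled through the Application Auto Scaling API (the `application-autoscaling` client in boto3). As a hedged sketch, the helper below builds the arguments for `register_scalable_target` and a target tracking `put_scaling_policy` on a DynamoDB table's read capacity; the table name, capacity limits, and 70 percent utilization target are assumptions for illustration.

```python
from typing import Tuple


def dynamodb_read_scaling(table_name: str, min_rcu: int, max_rcu: int,
                          target_utilization: float = 70.0) -> Tuple[dict, dict]:
    """Return (scalable target, scaling policy) argument dicts. Hypothetical helper."""
    if not 0 < min_rcu <= max_rcu:
        raise ValueError("require 0 < min_rcu <= max_rcu")
    common = {
        "ServiceNamespace": "dynamodb",
        "ResourceId": f"table/{table_name}",
        "ScalableDimension": "dynamodb:table:ReadCapacityUnits",
    }
    # Limits within which provisioned read capacity may move.
    target = {**common, "MinCapacity": min_rcu, "MaxCapacity": max_rcu}
    # Keep consumed/provisioned read capacity near the target percentage.
    policy = {
        **common,
        "PolicyName": f"{table_name}-read-target-tracking",
        "PolicyType": "TargetTrackingScaling",
        "TargetTrackingScalingPolicyConfiguration": {
            "TargetValue": target_utilization,
            "PredefinedMetricSpecification": {
                "PredefinedMetricType": "DynamoDBReadCapacityUtilization",
            },
        },
    }
    return target, policy
```

You would register the target first with `client.register_scalable_target(**target)` and then attach the policy with `client.put_scaling_policy(**policy)`.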

To create an autoscaling configuration on EC2, you need the following:

EC2 Launch template: Specifies the instance type, AMI, key pair, block device mapping, and other features the instance should be created with.

Scaling policy: Defines a trigger that specifies a metric ceiling (for scaling out) and floor (for scaling in). Any breach of the floor or ceiling for a certain period of time triggers autoscaling.

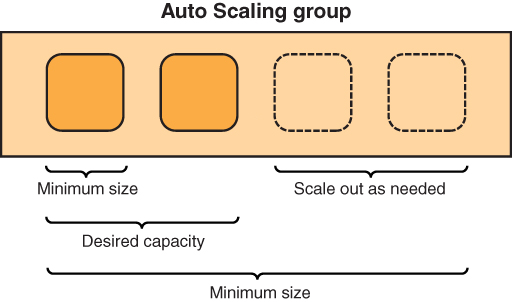

EC2 Auto Scaling group: Defines scaling limits, that is, the minimum, maximum, and desired numbers of instances. You need to provide a launch template and a scaling policy to apply during a scaling event.
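Putting these pieces together, the arguments to boto3's `autoscaling.create_auto_scaling_group` might look like the following sketch. The group name, launch template name, and subnet IDs are hypothetical; listing subnets in different availability zones is what lets the group spread instances across zones. The helper also checks the minimum, desired, maximum ordering that the group requires.

```python
from typing import List, Optional


def asg_params(name: str, launch_template_name: str, subnet_ids: List[str],
               min_size: int = 1, max_size: int = 10,
               desired: Optional[int] = None) -> dict:
    """Build create_auto_scaling_group arguments. Hypothetical helper."""
    desired = min_size if desired is None else desired
    if not 0 <= min_size <= desired <= max_size:
        raise ValueError("need 0 <= min_size <= desired <= max_size")
    if not subnet_ids:
        raise ValueError("at least one subnet is required")
    return {
        "AutoScalingGroupName": name,
        "LaunchTemplate": {
            "LaunchTemplateName": launch_template_name,
            "Version": "$Latest",  # launch from the newest template version
        },
        "MinSize": min_size,
        "MaxSize": max_size,
        "DesiredCapacity": desired,
        # Comma-separated subnet IDs; subnets in different AZs spread the group.
        "VPCZoneIdentifier": ",".join(subnet_ids),
    }
```

The scaling policy is attached separately, after the group exists, with `put_scaling_policy`.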

Dynamic Scaling

Traditionally, scaling policies have been designed with dynamic scaling in mind. For example, a common setup would include the following:

An Auto Scaling group with a minimum of 1 and a maximum of 10 instances

A CPU % ceiling of 70 percent for scale-out

A CPU % floor of 30 percent for scale-in

A breach duration of 10 minutes

A scaling definition of +/− 33 percent capacity on each scaling event
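The ceiling, floor, and breach-duration rules in this list can be modeled as a small decision function. This is a simplified sketch, not how Auto Scaling evaluates alarms internally: it assumes one aggregate CPU sample per minute and requires every sample in the last 10 minutes to be beyond the threshold, which roughly corresponds to a CloudWatch alarm with a 1-minute period and 10 evaluation periods. The function name is hypothetical.

```python
from typing import Optional, Sequence


def scaling_decision(cpu_by_minute: Sequence[float], ceiling: float = 70.0,
                     floor: float = 30.0, breach_minutes: int = 10) -> Optional[str]:
    """Return "out", "in", or None from per-minute aggregate CPU samples."""
    recent = list(cpu_by_minute)[-breach_minutes:]
    if len(recent) < breach_minutes:
        return None                      # not enough data for a sustained breach
    if all(v > ceiling for v in recent):
        return "out"                     # ceiling breached for the whole window
    if all(v < floor for v in recent):
        return "in"                      # floor breached for the whole window
    return None                          # within the 30-70 percent band
```

A single minute back inside the band resets the decision, which is what keeps short spikes from triggering a scaling event.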

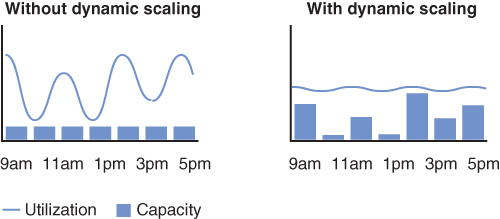

The application is now designed to operate between 30 and 70 percent aggregate CPU usage across the Auto Scaling group. After the ceiling is breached for 10 minutes, the Auto Scaling service adds roughly a third more instances to the group. Because a fractional result is rounded (a value between 0 and 1 becomes 1, and larger values are rounded down), a group of one, two, or three instances grows by one instance per scaling event; only at seven instances (33 percent of 7 is 2.31) does a single event add two. When the aggregate CPU usage falls below 30 percent for 10 minutes, the group shrinks by 33 percent, and the corresponding number of instances is removed each time the floor threshold is breached. Figure 4.4 illustrates dynamic scaling.

FIGURE 4.4 Dynamic scaling
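For `PercentChangeInCapacity` adjustments, EC2 Auto Scaling rounds fractional results: values between 0 and 1 become 1, values between 0 and −1 become −1, and other values are rounded toward zero. The function below reproduces that documented rule so you can compute how many instances a 33 percent step adds or removes at a given group size. The function name is our own, and the `MinAdjustmentMagnitude` policy setting, which can force a larger minimum step, is not modeled.

```python
import math


def percent_change_adjustment(current: int, percent: float) -> int:
    """Instances added (positive) or removed (negative) for a percent step."""
    raw = current * percent / 100.0
    if 0 < raw < 1:
        return 1           # small positive changes still add one instance
    if -1 < raw < 0:
        return -1          # small negative changes still remove one instance
    # Larger values: positive rounded down, negative rounded up (toward zero).
    return math.trunc(raw)
```

For example, with +33 percent, groups of one through six instances each grow by one instance, and a group of seven grows by two; the group's maximum size still caps the result.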

Manual and Scheduled Scaling

The Auto Scaling group also has a desired capacity (desired instance count). Setting it enables you to scale manually and override the size the scaling policy would otherwise maintain. You can change the desired count at any time to any value between the group’s minimum and maximum, and the group resizes accordingly. This capability is useful if you know of an upcoming event that will increase traffic to your site. You can prepare your environment to meet the demand in a much better way because you can grow the Auto Scaling group preemptively in anticipation of the traffic.

You can also set up a schedule to scale if you have a very predictable application. Perhaps it is a service being used only from 9 a.m. to 5 p.m. each day. You simply set the scale-out to happen at 8 a.m. in anticipation of the application being used and then set a scale-in scheduled action at 6 p.m. after the application is not being used anymore. This way you can easily reduce the cost of operating intermittently used applications by over 50 percent. Figure 4.5 illustrates scheduled scaling.

FIGURE 4.5 Scheduled scaling

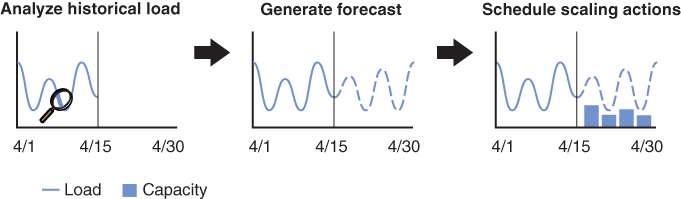
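The schedule and the savings claim can both be sketched. The first function does the arithmetic: running only from 8 a.m. to 6 p.m. uses 10 of 24 hours, saving about 58 percent of the instance-hours for capacity that is scaled in overnight (less if a minimum number of instances keeps running). The second builds arguments for boto3's `autoscaling.put_scheduled_update_group_action`, whose `Recurrence` field takes a cron expression; the group name, sizes, and time zone are assumptions.

```python
from typing import List


def scheduled_savings(start_hour: int, end_hour: int) -> float:
    """Fraction of instance-hours saved versus running 24 hours a day."""
    if not 0 <= start_hour < end_hour <= 24:
        raise ValueError("need 0 <= start_hour < end_hour <= 24")
    return 1 - (end_hour - start_hour) / 24


def daily_schedule(group: str, start_hour: int, end_hour: int, busy_size: int,
                   idle_size: int = 0, time_zone: str = "UTC") -> List[dict]:
    """Build scale-out and scale-in scheduled action arguments. Hypothetical helper."""
    for hour in (start_hour, end_hour):
        if not 0 <= hour <= 23:
            raise ValueError("cron hours must be between 0 and 23")

    def action(name: str, hour: int, size: int) -> dict:
        return {
            "AutoScalingGroupName": group,
            "ScheduledActionName": f"{group}-{name}",
            "Recurrence": f"0 {hour} * * *",  # cron: minute 0 of `hour`, every day
            "MinSize": size,
            "DesiredCapacity": size,
            "TimeZone": time_zone,
        }

    return [action("scale-out", start_hour, busy_size),
            action("scale-in", end_hour, idle_size)]
```

Each returned dictionary would be passed to `put_scheduled_update_group_action(**action)`.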

Predictive Scaling

Another Auto Scaling feature is predictive scaling, which uses machine learning to learn the load pattern of your application from historical CloudWatch data. Predictive scaling needs at least 24 hours of history to generate a forecast and analyzes data from up to the previous 14 days to account for daily and weekly spikes as it learns the patterns on a longer time scale. Figure 4.6 illustrates predictive scaling.

FIGURE 4.6 Predictive scaling
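A predictive scaling policy is created with the same `put_scaling_policy` call as other EC2 Auto Scaling policies, using `PolicyType="PredictiveScaling"`. The sketch below builds those arguments for a CPU-based policy; the helper name, group name, and 50 percent target are assumptions. It defaults to `ForecastOnly` mode, which generates forecasts without acting on them, so you can review the predictions before switching to `ForecastAndScale`.

```python
def predictive_policy_params(group: str, target_cpu: float = 50.0,
                             forecast_only: bool = True) -> dict:
    """Build put_scaling_policy arguments for predictive scaling. Hypothetical helper."""
    return {
        "AutoScalingGroupName": group,
        "PolicyName": f"{group}-predictive-cpu",
        "PolicyType": "PredictiveScaling",
        "PredictiveScalingConfiguration": {
            "MetricSpecifications": [{
                "TargetValue": target_cpu,  # desired average CPU across the group
                "PredefinedMetricPairSpecification": {
                    "PredefinedMetricType": "ASGCPUUtilization",
                },
            }],
            # ForecastOnly: forecast without scaling; ForecastAndScale: act on it.
            "Mode": "ForecastOnly" if forecast_only else "ForecastAndScale",
        },
    }
```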
