Handling Nonrelational Data in AWS

As you saw with the different data models discussed earlier in this chapter, not all data fits well into a traditional relational database. Some cases are more suitable for a NoSQL back end than a standard SQL back end—such as where data requires a flexible schema, where data is being collected temporarily, where data consistency is not as important as availability, and where consistent, low-latency write performance is crucial. AWS offers several different solutions for storing NoSQL data, including the following:

DynamoDB: A NoSQL key/value storage back end that is addressable via HTTP/HTTPS

ElastiCache: An in-memory NoSQL storage back end

DocumentDB: A NoSQL document storage back end

Neptune: A NoSQL graphing solution for storing and addressing complex networked datasets

Redshift: A columnar data warehousing solution that can scale to 2 PB per volume

Redshift Spectrum: A serverless data warehousing solution that can address data sitting on S3

Timestream: A time series recording solution for use with IoT and industrial telemetry

Quantum Ledger Database (QLDB): A ledger database designed for record streams, banking transactions, and so on

As you can see, you are simply spoiled for choice when it comes to storing nonrelational data types in AWS. This chapter focuses on the first two database types, DynamoDB and ElastiCache, as they are important both for gaining a better understanding of the AWS environment and for the AWS Certified Developer–Associate exam.

Amazon DynamoDB

DynamoDB is a serverless NoSQL solution that uses a standard REST API model for both management functions and data access operations. The DynamoDB back end is designed to store key/value data accessible via a simple HTTP access model. DynamoDB supports storing any amount of data and performs predictably even under extreme read and write loads of 10,000 to 100,000 requests per second against a single table, with single-digit millisecond latency. When reading data, DynamoDB supports eventually consistent, strongly consistent, and transactional requests. Query and scan requests can carry filter expressions that DynamoDB applies on the server side, and when you use the AWS CLI, you can add a JMESPath expression with the --query option to filter and sort the returned output on the client side.

DynamoDB organizes data into three main components:

Tables, which store items

Items, which have attributes

Attributes, which are the key/value pairs containing data

Tables

Like many other NoSQL databases, DynamoDB has a distributed back end that enables it to scale linearly and provide your application with the required level of performance. How evenly the data is distributed across the DynamoDB back end depends on the key you choose. When creating a table, you are asked to select a primary key (also called a hash key). The primary key is used to create hashes that allow the data to be distributed and replicated across the back end according to the hash. To get the most performance out of DynamoDB, you should choose a primary key that has a lot of variety. A primary key is also indexed so that the attributes stored under a certain key are accessible very quickly (without the need for a scan of the table).

For example, imagine that you are in charge of a company that makes online games. A table is used to record all scores from all users across a hundred or so games, each with its own unique identifier. Your company has millions of users, each with a unique username. To select a primary key, you have a choice of either game ID or username. There are far more unique usernames than game IDs, so the best choice is the username, because the high level of variety in usernames ensures that the data is distributed evenly across the back end.
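The effect of key variety can be sketched with a toy model. The following is only an illustration: it assumes a generic MD5-based hash and eight partitions, neither of which reflects how DynamoDB actually hashes or partitions data, and the game IDs and usernames are made up. It counts how many of 100,000 score writes land on the busiest partition when the key is one of 100 game IDs versus one of 100,000 usernames.

```python
import hashlib
from collections import Counter


def partition_for(key: str, partitions: int) -> int:
    """Map a key to a partition number with a stable hash (illustrative only)."""
    digest = hashlib.md5(key.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % partitions


def spread(keys, partitions: int = 8) -> Counter:
    """Count how many writes land on each partition."""
    return Counter(partition_for(k, partitions) for k in keys)


TOTAL_WRITES = 100_000

# Hypothetical workload: the same number of writes keyed two different ways.
by_game = spread(f"game-{i % 100}" for i in range(TOTAL_WRITES))
by_user = spread(f"user-{i}" for i in range(TOTAL_WRITES))

print("busiest partition share, game ID key: ", max(by_game.values()) / TOTAL_WRITES)
print("busiest partition share, username key:", max(by_user.values()) / TOTAL_WRITES)
```

With only 100 distinct game IDs, whole blocks of writes move together, so some partitions end up noticeably busier than others. With one key per user, the load settles close to an even one-eighth share per partition.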

Optionally, you can also add a sort key to each table to add an additional index that you can use in your query to sort the data within a table. Depending on the type of data, the sorting can be temporal (for example, when the sort key is a date stamp), by size (when the sort key is a value of a certain metric), or by any other arbitrary string.

A table is essentially just a collection of items that are grouped together for a purpose. A table is regionally bound and is highly available within the region as the DynamoDB back end is distributed across all availability zones in a region. Because a table is regionally bound, the table name must be unique within the region within your account.

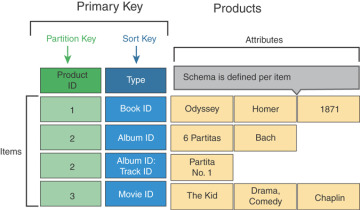

Figure 4-9 illustrates the structure of a DynamoDB table.

Figure 4-9 Structure of a DynamoDB Table

DynamoDB also has support for reading streams of changes to each table. By enabling DynamoDB Streams on a table, you can point an application to the table and then continuously monitor the table for any changes. As soon as a change occurs, the stream is populated, and you are able to read the old value, the new value, or both the new and old values. This means you can use DynamoDB as a front end for your real-time processing environment and also integrate DynamoDB with security systems, monitoring systems, Lambda functions, and other intelligent components that can perform actions triggered by a change in DynamoDB.
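As a rough sketch of what a stream consumer sees, the function below walks a batch of change records shaped like the events that DynamoDB Streams delivers to a Lambda function and pulls out the event name, the keys, and the old and new images. The function name and the sample event are hypothetical, and whether the old image, the new image, or both are present depends on the view type chosen when the stream was enabled.

```python
def summarize_stream_records(event):
    """Return one summary dict per change record in a stream event."""
    changes = []
    for record in event.get("Records", []):
        data = record.get("dynamodb", {})
        changes.append({
            "event": record.get("eventName"),  # INSERT, MODIFY, or REMOVE
            "keys": data.get("Keys"),
            "old": data.get("OldImage"),       # None when no old image is included
            "new": data.get("NewImage"),       # None when no new image is included
        })
    return changes


# Hypothetical event: the price of one item changes from 1.5 to 1.8.
sample_event = {
    "Records": [
        {
            "eventName": "MODIFY",
            "dynamodb": {
                "Keys": {"name": {"S": "potato"}, "type": {"S": "tuber"}},
                "OldImage": {"name": {"S": "potato"}, "type": {"S": "tuber"}, "cost": {"N": "1.5"}},
                "NewImage": {"name": {"S": "potato"}, "type": {"S": "tuber"}, "cost": {"N": "1.8"}},
            },
        }
    ]
}

changes = summarize_stream_records(sample_event)
for change in changes:
    print(change["event"], change["keys"], change["old"], change["new"])
```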

When creating a table, you need to specify the performance mode and choose either provisioned capacity or on-demand mode. Provisioned capacity is better when there is a predictable load expected on the table, and on-demand mode can be useful for any kind of unknown loads on the table. With provisioned capacity, you can simply select AutoScaling for the capacity, which can increase or decrease the provisioned capacity when the load increases or decreases.

Encryption is also available in DynamoDB at creation; you can select whether to integrate encryption with the KMS service or with a customer-provided key.

Items

An item in a table contains all the attributes for a certain primary key, or for the primary key and sort key if a sort key has been selected on the table. Each item can be up to 400 KB in size and is designed to hold key/value data with any type of payload. The items are accessed via a standard HTTP model in which the put, get, update, and delete operations (PutItem, GetItem, UpdateItem, and DeleteItem in the API) allow you to perform create, read, update, and delete (CRUD) operations. Items can also be retrieved in batches, and a batch operation is issued as a single HTTP method call that can retrieve up to 100 items or write up to 25 items with a collective size not exceeding 16 MB.
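Because of the per-call item limits, a client that needs to write or read more items has to split the work into several batch calls. A minimal sketch of that splitting step follows. The helper name and the sample items are hypothetical, and the sketch checks only the item count, not the 16 MB size limit.

```python
from itertools import islice

MAX_BATCH_WRITE_ITEMS = 25   # per-call write limit quoted in the text
MAX_BATCH_GET_ITEMS = 100    # per-call read limit quoted in the text


def chunked(items, size):
    """Yield successive lists of at most `size` items."""
    iterator = iter(items)
    while True:
        batch = list(islice(iterator, size))
        if not batch:
            return
        yield batch


# Hypothetical workload: 60 items to write need three batch calls.
items = [{"name": f"vegetable-{i}"} for i in range(60)]
write_batches = list(chunked(items, MAX_BATCH_WRITE_ITEMS))
print([len(batch) for batch in write_batches])  # [25, 25, 10]
```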

Attributes

An attribute is a payload of data with a distinct key. An attribute can have one of the following values:

A single value that is either a string, a number, a Boolean, a null, or a list of values

A document containing multiple nested key/value attributes up to 32 levels deep

A set of multiple scalar values

Scalar Type Key/Value Pairs

Each attribute holds either a single value or a list of arbitrary values. In this example, the list has a combination of number, string, and Boolean values:

{
"name" : "Anthony",
"height" : "6.2",
"results" : [2.9, "ab", false]
}

These attributes would be represented in a DynamoDB table as illustrated in Table 4-3.

Table 4-3 Scalar Types

| name | height | results |
| --- | --- | --- |
| Anthony | 6.2 | 2.9, ab, false |

Document Type: A Map Attribute

The document attribute contains nested key/value pairs, as shown in this example:

{
"date_of_birth" : { "year" : 1979, "month" : 9, "day" : 23 }
}

This attribute would be represented in a DynamoDB table as illustrated in Table 4-4.

Table 4-4 Table with an Embedded Document

| name | height | results | date_of_birth |
| --- | --- | --- | --- |
| Anthony | 6.2 | 5, 2.9, ab, false | year:1979, month:09, day:23 |

Set Type: A Set of Strings

The set attribute contains a set of values of the same type—in this example, string:

{

"activities" :

["running", "hiking", "swimming" ]

}

This attribute would be represented in a DynamoDB table as shown in Table 4-5.

Table 4-5 Table with an Embedded Document and Set

| name | height | results | date_of_birth | activities |
| --- | --- | --- | --- | --- |
| Anthony | 6.2 | 5, 2.9, ab, false | year:1979, month:09, day:23 | running, hiking, swimming |
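When these attributes travel over the API, each value is wrapped in a type descriptor such as S (string), N (number), BOOL, NULL, L (list), M (map), or SS (string set). The following sketch shows one way to encode plain Python values into that typed form. The function name is hypothetical, it handles only string sets and skips binary types, and in real code an SDK serializer (for example, the one that ships with boto3) would normally do this work.

```python
def to_attribute_value(value):
    """Encode a Python value as a DynamoDB-style typed attribute value."""
    if value is None:
        return {"NULL": True}
    if isinstance(value, bool):            # check bool first: bool is a subclass of int
        return {"BOOL": value}
    if isinstance(value, (int, float)):
        return {"N": str(value)}           # numbers are sent as strings
    if isinstance(value, str):
        return {"S": value}
    if isinstance(value, (set, frozenset)):
        if value and all(isinstance(v, str) for v in value):
            return {"SS": sorted(value)}   # sorted only to make the output stable
        raise TypeError("this sketch handles only non-empty sets of strings")
    if isinstance(value, list):
        return {"L": [to_attribute_value(v) for v in value]}
    if isinstance(value, dict):
        return {"M": {k: to_attribute_value(v) for k, v in value.items()}}
    raise TypeError(f"unsupported type: {type(value).__name__}")


# The scalar, document, and set attributes from the examples above.
person = {
    "name": "Anthony",
    "results": [2.9, "ab", False],
    "date_of_birth": {"year": 1979, "month": 9, "day": 23},
    "activities": {"running", "hiking", "swimming"},
}
encoded = {key: to_attribute_value(value) for key, value in person.items()}
for key, value in encoded.items():
    print(key, value)
```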

Secondary Indexes

Sometimes the combination of primary key and sort key does not give you enough of an index to efficiently search through data. You can add two more types of indexes to each table by defining the following:

Local secondary index (LSI): The LSI can be considered an additional sort key for sifting through multiple entries of a certain primary key. This is very useful in applications where two ranges (the sort key and the secondary index) are required to retrieve the correct dataset. The LSI consumes some of the provisioned capacity of the table, so you need to account for it in your performance calculations when you create one.

Global secondary index (GSI): The GSI can be considered an additional primary key on which the data can be accessed. The GSI allows you to pivot a table and access the data through the key defined in the GSI and get a different view of the data. The GSI has its own provisioned read and write capacity units that can be set completely independently of the capacity units provisioned for the table.

Planning for DynamoDB Capacity

Careful capacity planning should be done whenever using provisioned capacity units to avoid either overprovisioning or underprovisioning your DynamoDB capacity. Although AutoScaling is essentially enabled by default on any newly created DynamoDB table, you should still calculate the required read and write capacity according to your projected throughput and design the AutoScaling with the appropriate limits of minimum and maximum capacity in mind. You also have the ability to disable AutoScaling and set a certain capacity to match any kind of requirements set out by your SLA.

When calculating capacities, you need to set both the read capacity units (RCUs) and write capacity units (WCUs):

One RCU represents one strongly consistent read per second, or two eventually consistent reads per second, of an item up to 4 KB in size.

One WCU represents one write request per second of up to 1 KB in size.

For example, say that you have industrial sensors that continuously feed data at a rate of 10 MB per second, with each write being approximately 500 bytes in size. Because the write capacity units represent a write of up to 1 KB in size, each 500-byte write will consume 1 unit, meaning you will need to provision 20,000 WCUs to allow enough performance for all the writes to be captured.

As another example, say you have 50 KB feeds from a clickstream being sent to DynamoDB at the same 10 MB per second. Each write will now consume 50 WCUs, and at 10 MB per second, you are getting 200 writes per second, which means 10,000 WCUs will be sufficient to capture all the writes.

With reads, the calculation depends on whether you are reading with strong or eventual consistency because eventually consistent reads can perform double the work per capacity unit. For example, say that an application is reading at a consistent rate of 10 MB per second and performing strongly consistent reads of items 50 KB in size. Each read consumes 13 RCUs (50 KB divided by 4 KB, rounded up), whereas an eventually consistent read consumes only 7 RCUs (half of 13, rounded up). At 10 MB per second, the application performs 200 reads per second, so to read in a strongly consistent manner, you would need an aggregate of 2,600 RCUs, whereas eventually consistent reads would require you to provision only 1,400 RCUs.
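The arithmetic in these examples can be captured in two small helper functions. This is a sketch under stated assumptions: the function names are hypothetical, sizes are expressed in KB with 10 MB treated as 10,000 KB so that the results match the worked figures above, and the eventually consistent figure is rounded up per read as in the text (halving the aggregate instead would give a slightly lower number).

```python
import math

WCU_SIZE_KB = 1   # one WCU covers a write of up to 1 KB
RCU_SIZE_KB = 4   # one RCU covers a strongly consistent read of up to 4 KB


def write_capacity_units(throughput_kb_per_s, item_kb):
    """WCUs needed to sustain the given write throughput."""
    writes_per_s = math.ceil(throughput_kb_per_s / item_kb)
    units_per_write = math.ceil(item_kb / WCU_SIZE_KB)
    return writes_per_s * units_per_write


def read_capacity_units(throughput_kb_per_s, item_kb, strongly_consistent=True):
    """RCUs needed to sustain the given read throughput."""
    reads_per_s = math.ceil(throughput_kb_per_s / item_kb)
    units_per_read = math.ceil(item_kb / RCU_SIZE_KB)
    if not strongly_consistent:
        # An eventually consistent read costs half as much, rounded up per read.
        units_per_read = math.ceil(units_per_read / 2)
    return reads_per_s * units_per_read


print(write_capacity_units(10_000, 0.5))                           # sensor writes: 20000
print(write_capacity_units(10_000, 50))                            # clickstream writes: 10000
print(read_capacity_units(10_000, 50))                             # strongly consistent: 2600
print(read_capacity_units(10_000, 50, strongly_consistent=False))  # eventually consistent: 1400
```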

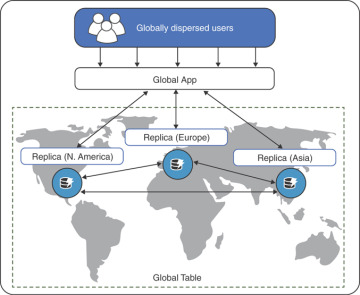

Global Tables

In DynamoDB, you also have the ability to create a DynamoDB global table (see Figure 4-10). This is a way to share data in a multi-master replication approach across tables in different regions. To create a global table, you first need to create tables in each of the regions and then connect them together in the AWS console or by issuing a command in the AWS CLI to create a global table from the previously created regional tables. Once a global table is established, each of the tables subscribes to the DynamoDB stream of every other table in the global table configuration. This means that a write to one of the tables is replicated to the other regions almost immediately. The latency involved in this operation is roughly equal to the network transit time from one region to another.

Figure 4-10 DynamoDB Global Tables

Accessing DynamoDB Through the CLI

It is possible to interact with a DynamoDB table through the CLI. Using the CLI is an effective way to show how each and every action being performed is simply an API call. The CLI has abstracted shorthand commands, but you can also use direct API calls with the JSON attributes.

In this example, you will create a DynamoDB table called vegetables and define some attributes in the table. To create the table, you use the aws dynamodb create-table command, where you need to define the following:

--table-name: The name of the table

--attribute-definitions: The attributes with the name of your attribute (AttributeName) and the type of value (AttributeType: S = string, N = number, or B = binary)

--key-schema: The primary and sort key to use

KeyType: The primary key (HASH) and the sort key (RANGE)

--provisioned-throughput: The RCUs and WCUs

The command should look like so:

aws dynamodb create-table \
    --table-name vegetables \
    --attribute-definitions \
        AttributeName=name,AttributeType=S \
        AttributeName=type,AttributeType=S \
    --key-schema \
        AttributeName=name,KeyType=HASH \
        AttributeName=type,KeyType=RANGE \
    --provisioned-throughput ReadCapacityUnits=5,WriteCapacityUnits=10

After the table is created, you can use the aws dynamodb put-item command to write items to the table:

--table-name: The table you want to write into

--item: The item, as a key/value pair

"key": {"data type":"value"}: The name, type, and value of the data

The command should look something like this:

aws dynamodb put-item --table-name vegetables \
    --item '{ "name": {"S": "potato"}, "type": {"S": "tuber"}, "cost": {"N": "1.5"} }'

If you are following along with the instructions, you can create some more entries in the table with the put-item command.

When doing test runs, you can also add the --return-consumed-capacity TOTAL switch at the end of your command to get the number of capacity units the command consumed in the API response.

Next, to retrieve the item with the primary key potato and sort key tuber from the DynamoDB table, you issue the following command:

aws dynamodb get-item --table-name vegetables \
    --key '{ "name": {"S": "potato"}, "type": {"S": "tuber"} }'

The underlying API response should look something like the output in Example 4-9. The CLI itself prints only the JSON body, not the HTTP headers.

Example 4-9 Response from the aws dynamodb get-item Query

HTTP/1.1 200 OK

x-amzn-RequestId: <RequestId>

x-amz-crc32: <Checksum>

Content-Type: application/x-amz-json-1.0

Content-Length: <PayloadSizeBytes>

Date: <Date>

{
"Item": {
"name": { "S": "potato" },
"type": { "S": "tuber" },
"cost": { "N": "1.5" }
}
}
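An application usually wants plain values rather than the typed wrappers returned by get-item. The sketch below decodes a response body of that shape into ordinary Python values. The function name and the sample body are hypothetical, only a few type descriptors are handled, and numbers are decoded as Decimal to avoid floating-point rounding.

```python
import json
from decimal import Decimal


def from_attribute_value(attribute):
    """Decode one typed attribute value such as {"S": "potato"}."""
    (type_tag, value), = attribute.items()   # exactly one descriptor per value
    if type_tag == "S":
        return value
    if type_tag == "N":
        return Decimal(value)
    if type_tag == "BOOL":
        return value
    if type_tag == "NULL":
        return None
    if type_tag == "L":
        return [from_attribute_value(v) for v in value]
    if type_tag == "M":
        return {k: from_attribute_value(v) for k, v in value.items()}
    if type_tag == "SS":
        return set(value)
    raise ValueError(f"unsupported type descriptor: {type_tag}")


# Sample body shaped like a get-item response for the potato item.
response_body = '{"Item": {"name": {"S": "potato"}, "type": {"S": "tuber"}, "cost": {"N": "1.5"}}}'
item = {key: from_attribute_value(value)
        for key, value in json.loads(response_body)["Item"].items()}
print(item)
```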

User Authentication and Access Control

Because DynamoDB provides a single API to control both the management and data access operations, you can simply define two different policies to allow for the following:

Administrative access with permissions to create and modify DynamoDB tables

Data access with specific permissions to read, write, update, or delete items in specific tables

You can also write your application to perform both the administrative and data access tasks. This gives you the ability to easily self-provision the table from the application. This is especially useful for any kind of data where the time value is very sensitive. It is also useful for any kind of temporary data, such as sessions or shopping carts in an e-commerce website or an internal report table that can give management a monthly revenue overview.

You can provision as many tables as needed. If any tables are not in use, you can simply delete them. When the data must remain available, you can reduce the RCU and WCU provisioning to a minimal value, such as 5 units or lower. This way, a reporting engine can still access historical data, and the cost of keeping the table running is minimal.

For a sales application that records sales metrics each month, the application could be trusted to create a new table every month with the production capacity units but maintain the old tables for analytics. Every month, the application would reduce the previous monthly table's capacity units to whatever would be required for analytics to run.

Because policies give you the ability to granularly control permissions, you can lock down the application to only one particular table or a set of values within the table by simply adding a condition on the policy or by using a combination of allow and deny rules.

The policy in Example 4-10 locks down the application to exactly one table by denying access to everything that is not this table and allowing access to this table. This way, you can ensure that any kind of misconfiguration will not allow the application to read or write to any other table in DynamoDB.

Example 4-10 IAM Policy Locking Down Permissions to the Exact DynamoDB Table

{
"Version": "2012-10-17",
"Statement":[{
"Effect":"Allow",
"Action":["dynamodb:*"],
"Resource":["arn:aws:dynamodb:us-east-1:111222333444:table/vegetables"]
},
{
"Effect":"Deny",
"Action":["dynamodb:*"],
"NotResource":["arn:aws:dynamodb:us-east-1:111222333444:table/vegetables"]
}
]
}
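If an application provisions its own tables, it may be convenient to generate this kind of policy document per table rather than write it by hand. A minimal sketch follows. The function name is hypothetical, and it only builds the JSON document; attaching the policy to a role or user is a separate step that is not shown.

```python
import json


def table_lockdown_policy(region, account_id, table_name):
    """Build an allow/deny policy pair scoped to exactly one DynamoDB table."""
    table_arn = f"arn:aws:dynamodb:{region}:{account_id}:table/{table_name}"
    return {
        "Version": "2012-10-17",
        "Statement": [
            # Allow every DynamoDB action on the one table.
            {"Effect": "Allow", "Action": ["dynamodb:*"], "Resource": [table_arn]},
            # Deny every DynamoDB action on anything that is not that table.
            {"Effect": "Deny", "Action": ["dynamodb:*"], "NotResource": [table_arn]},
        ],
    }


policy = table_lockdown_policy("us-east-1", "111222333444", "vegetables")
print(json.dumps(policy, indent=2))
```

Building the ARN once and reusing it in both statements avoids the two entries drifting apart, which would otherwise leave the table either blocked or the lockdown incomplete.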