Storing Static Assets in AWS

To identify static assets, you can scan the file system of your application and look for files that have not changed since they were created. If a file's creation time matches its last modification time, that is a strong indication that the asset is a static piece of data. In addition, any files being delivered via HTTP, FTP, SCP, or another file transfer service can usually be treated as static assets, because these files are most likely not consumed on the server itself but rather by a client connecting to the server through one of these protocols. Once you have identified your static assets, you need to choose the right type of data store. It needs to scale with your data and do so in an efficient, cost-effective way. In AWS, Amazon Simple Storage Service (S3) can store any kind of data blob on an object storage back end with virtually unlimited capacity.
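As a rough sketch of the file system scan described above, the following Python walks a directory tree and flags files whose modification time is within a small tolerance of their creation time. The root directory and the one-second tolerance are hypothetical choices. A true creation time is only exposed on some platforms (`st_birthtime`). On Linux, the fallback `st_ctime` records the last metadata change rather than creation, so treat the result as a list of candidates, not proof.

```python
import os
import tempfile
import time


def creation_time(st: os.stat_result) -> float:
    # st_birthtime is a true creation time where the platform exposes it
    # (e.g., macOS/BSD). Otherwise fall back to st_ctime, which is the creation
    # time on Windows but the last metadata *change* time on Linux, so the
    # comparison below is only an approximation there.
    return getattr(st, "st_birthtime", st.st_ctime)


def find_static_candidates(root: str, tolerance_s: float = 1.0) -> list:
    """Return paths (relative to root) of files whose modification time is
    within tolerance_s seconds of their creation time."""
    candidates = []
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            path = os.path.join(dirpath, name)
            st = os.stat(path)
            if abs(st.st_mtime - creation_time(st)) <= tolerance_s:
                candidates.append(os.path.relpath(path, root))
    return sorted(candidates)


if __name__ == "__main__":
    # Demo: one untouched file and one whose modification time is pushed forward
    with tempfile.TemporaryDirectory() as tmp:
        for name in ("logo.png", "app.log"):
            with open(os.path.join(tmp, name), "w") as f:
                f.write("data")
        later = time.time() + 3600
        os.utime(os.path.join(tmp, "app.log"), (later, later))
        print(find_static_candidates(tmp))
```

In the demo, only the file whose modification time was moved away from its creation time should drop out of the candidate list.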

Amazon S3

Amazon S3 is essentially a serverless storage back end that is accessible via HTTP/HTTPS. The service is fully managed and is designed for 99.99% availability per region and 99.999999999% durability of data. The 99.99% availability means you can expect no more than about 4.3 minutes of service outage per region in a 30-day month (0.01% of 43,200 minutes is 4.32 minutes). The 99.999999999% durability means that if you store 10,000,000 objects, you can on average expect to lose a single object once every 10,000 years.
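The availability and durability percentages translate into concrete numbers with a little arithmetic. The Python sketch below assumes a 30-day month. It treats the durability figure as an annual per-object loss probability, which is how AWS illustrates it. `Decimal` keeps the percentages exact instead of approximating them in binary floating point; the helper names are hypothetical.

```python
from decimal import Decimal


def monthly_downtime_minutes(availability_pct: str, days: int = 30) -> Decimal:
    """Maximum downtime implied by an availability percentage over `days` days."""
    unavailable_fraction = 1 - Decimal(availability_pct) / 100
    return unavailable_fraction * days * 24 * 60


def years_per_lost_object(durability_pct: str, objects: int) -> Decimal:
    """Average years between single-object losses for `objects` stored objects,
    treating (100 - durability_pct)% as each object's annual loss probability."""
    annual_loss_probability = 1 - Decimal(durability_pct) / 100
    expected_losses_per_year = annual_loss_probability * objects
    return 1 / expected_losses_per_year


print(float(monthly_downtime_minutes("99.99")))                  # 4.32
print(float(monthly_downtime_minutes("99.9")))                   # 43.2
print(float(years_per_lost_object("99.999999999", 10_000_000)))  # 10000.0
```

The second line shows that an outage of roughly 45 minutes per month corresponds to 99.9% availability, not 99.99%.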

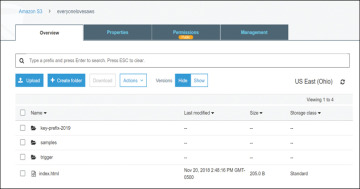

S3 delivers all content through containers called buckets. Each bucket serves as a unique endpoint where files and objects can be aggregated (see Figure 4-1). Each file you upload to S3 is stored as an object identified by a key, which is the unique identifier of the file within the S3 bucket. A key can be composed of the filename and prefixes. Prefixes can be used to structure the files further and to provide a directory-like view of them, because S3 itself has no concept of directories.

Figure 4-1 The Key Prefixes Representing a Directory Structure in S3
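The directory-like view that prefixes provide is computed at listing time. The S3 ListObjectsV2 API accepts `Prefix` and `Delimiter` parameters and returns the matching keys plus `CommonPrefixes` that act like folders. The Python sketch below emulates that behavior over an in-memory list of keys taken from the examples later in this section. `list_with_delimiter` is a hypothetical helper, not an S3 client call.

```python
def list_with_delimiter(keys, prefix="", delimiter="/"):
    """Emulate a prefix/delimiter listing over a flat set of S3 keys.

    Returns (objects, common_prefixes): keys that sit directly "in" the
    prefix, and deeper keys rolled up to the next delimiter as "folders".
    """
    objects, common_prefixes = [], set()
    for key in sorted(keys):
        if not key.startswith(prefix):
            continue
        remainder = key[len(prefix):]
        if delimiter in remainder:
            # Everything past the next delimiter collapses into one "folder"
            folder = prefix + remainder.split(delimiter, 1)[0] + delimiter
            common_prefixes.add(folder)
        else:
            objects.append(key)
    return objects, sorted(common_prefixes)


keys = [
    "index.html",
    "key-prefix-2019/index.html",
    "key-prefix-2019/08/20/index.html",
]
print(list_with_delimiter(keys))
# (['index.html'], ['key-prefix-2019/'])
print(list_with_delimiter(keys, prefix="key-prefix-2019/"))
# (['key-prefix-2019/index.html'], ['key-prefix-2019/08/'])
```

The second call shows how a client can present key-prefix-2019/08/ as a subfolder even though only the full key key-prefix-2019/08/20/index.html exists in the bucket.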

With S3, you can control access permissions at the bucket level and thus define the level of access to the bucket itself. You can make a bucket completely public by allowing anonymous access, or you can strictly control access to each key in the bucket. There are two different ways of allowing access to an S3 bucket:

Use an access control list (ACL) or a bucket ACL: ACLs control permissions more coarsely than a bucket policy does. This approach is designed to quickly grant a large group of grantees, such as another AWS account or everyone, a specific type of access to all the keys in the bucket. For example, an ACL can easily be used to specify that everyone can list the contents of the bucket.

Use a bucket policy: A bucket policy is a JSON-formatted document written in the same policy language as an IAM policy, with an added Principal element that specifies who is granted access. A bucket policy can be used to granularly control access to a bucket and its contents. For example, by using a bucket policy, you can allow a specific user to access only a specific key within a bucket.
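To illustrate the per-key control described above, the Python sketch below builds a bucket policy that lets a single IAM user read a single object. The account ID 111122223333, the user alice, the key reports/q3.pdf, and the helper `single_key_policy` are all hypothetical placeholders.

```python
import json


def single_key_policy(bucket: str, key: str, user_arn: str) -> dict:
    """Bucket policy that lets one IAM user read exactly one object."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "AllowOneUserOneKey",
                "Effect": "Allow",
                # Bucket policies name the grantee explicitly in Principal
                "Principal": {"AWS": user_arn},
                "Action": "s3:GetObject",
                # The object ARN is the bucket ARN plus the full key
                "Resource": f"arn:aws:s3:::{bucket}/{key}",
            }
        ],
    }


# Hypothetical account, user, and key used purely for illustration
policy = single_key_policy(
    "everyonelovesaws",
    "reports/q3.pdf",
    "arn:aws:iam::111122223333:user/alice",
)
print(json.dumps(policy, indent=2))
```

The printed JSON could be saved to a file and applied with aws s3api put-bucket-policy, in the same way as the website policy later in this chapter.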

Delivering Content from S3

The S3 service is very easy to use when developing applications because it is addressable via standard HTTP method calls. Because the service delivers files through a standard HTTP web interface, it is well suited for storing any kind of static website content, sharing files, hosting package repositories, and even hosting a static website that can have extended app-like functionality through client-side scripting. Developers can also use the built-in S3 event notification system to send messages about file changes to other AWS services. For example, AWS Lambda can pick up any file arriving in S3 and transform it, record its metadata, and so on, which greatly enhances what the static website can do. Figure 4-2 illustrates how a file being stored on S3 can trigger a dynamic action on AWS Lambda.

Figure 4-2 S3 Events Triggering the Lambda Service
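On the Lambda side of Figure 4-2, a function receives an event describing the new objects. The following is a minimal Python sketch. It assumes the standard S3 event notification payload, where each entry in Records carries s3.bucket.name and s3.object.key, and object keys arrive URL-encoded. The processing step is only a placeholder that collects the bucket, key, and size. The sample event is hypothetical and trimmed to the fields the handler reads.

```python
import json
from urllib.parse import unquote_plus


def lambda_handler(event, context):
    """Collect bucket, key, and size for every object in an S3 event."""
    processed = []
    for record in event.get("Records", []):
        s3 = record["s3"]
        bucket = s3["bucket"]["name"]
        # Keys are URL-encoded in the event (a space arrives as '+')
        key = unquote_plus(s3["object"]["key"])
        size = s3["object"].get("size", 0)
        # Placeholder: a real function would transform the file or store metadata
        processed.append(
            {"bucket": bucket, "key": key, "size": size,
             "event": record.get("eventName")}
        )
    print(json.dumps(processed))
    return processed


# Hypothetical, trimmed-down event for a local test run
sample_event = {
    "Records": [
        {
            "eventName": "ObjectCreated:Put",
            "s3": {
                "bucket": {"name": "everyonelovesaws"},
                "object": {"key": "images/new+logo.png", "size": 2048},
            },
        }
    ]
}
lambda_handler(sample_event, None)
```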

Because S3 is essentially an API that you communicate with, you can consider it programmatically accessible storage. By integrating S3 API calls into your application, you can streamline data ingestion and extend raw storage with application-level capabilities. These developer-friendly features have made S3 the gold standard for object storage and content delivery.

Working with S3 in the AWS CLI

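To create a bucket, you can use the aws s3api create-bucket command. In any region other than us-east-1, you must also pass the region as a location constraint:

aws s3api create-bucket --bucket bucket-name --region region-id --create-bucket-configuration LocationConstraint=region-id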

Say that you want to create a bucket called everyonelovesaws. If you are following along with this book, you will have to choose a different name because S3 bucket names must be globally unique, and the everyonelovesaws bucket already exists for the purpose of demonstrating FQDNs. To create the bucket, replace bucket-name and set your desired region:

aws s3api create-bucket --bucket everyonelovesaws --region us-east-2 --create-bucket-configuration LocationConstraint=us-east-2
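The same region rule applies when you create buckets from code. The Python sketch below builds the keyword arguments for the create_bucket call of the boto3 S3 client. It adds CreateBucketConfiguration only for regions other than us-east-1, the default location that S3 uses when no constraint is given. `create_bucket_kwargs` is a hypothetical helper, and boto3 itself is not imported so the sketch stays self-contained.

```python
def create_bucket_kwargs(bucket: str, region: str) -> dict:
    """Build keyword arguments for the boto3 S3 client's create_bucket()."""
    kwargs = {"Bucket": bucket}
    # us-east-1 is the default location; every other region needs an
    # explicit LocationConstraint that matches the target region.
    if region != "us-east-1":
        kwargs["CreateBucketConfiguration"] = {"LocationConstraint": region}
    return kwargs


print(create_bucket_kwargs("everyonelovesaws", "us-east-2"))
# {'Bucket': 'everyonelovesaws', 'CreateBucketConfiguration': {'LocationConstraint': 'us-east-2'}}
print(create_bucket_kwargs("everyonelovesaws", "us-east-1"))
# {'Bucket': 'everyonelovesaws'}
```

With boto3 installed, you could pass the result as boto3.client("s3", region_name="us-east-2").create_bucket(**create_bucket_kwargs("everyonelovesaws", "us-east-2")).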

After the bucket is created, you can upload an object to it. You can upload any arbitrary file, but in this example, you can upload the index file that will later be used for the static website:

aws s3 cp index.html s3://everyonelovesaws/

This simply uploads this one file to the root of the bucket. To do a bit more magic, you can choose to upload a complete directory, such as your website directory:

aws s3 cp /my-website/ s3://everyonelovesaws/ --recursive

You might also decide to include only certain files by using the --exclude and --include switches. For example, when you update your website HTML, you might want to upload all the HTML files but omit any other files, such as images, videos, and CSS. Without filters, you would have to search for all the HTML files and possibly run multiple commands. To do all this with one command, you can run the following:

aws s3 cp /my-website/ s3://everyonelovesaws/ --recursive --exclude "*" --include "*.html"

By first excluding everything (*) and then including only *.html, you ensure that all HTML files get uploaded while all other files are skipped. The filters are applied in the order given, and later filters take precedence over earlier ones.
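Because the order of the filters matters, it may help to model the precedence rule. The following Python sketch applies --exclude/--include patterns in order, lets a later match override an earlier one, and starts from the CLI's default of including every file. `is_included` is a hypothetical helper, not AWS CLI code. Patterns are matched against the path relative to the source directory. The sketch uses `fnmatchcase` so its results do not depend on the platform's case rules.

```python
from fnmatch import fnmatchcase


def is_included(rel_path: str, filters: list) -> bool:
    """Decide whether a file would be transferred, given (kind, pattern) filters
    where kind is "exclude" or "include" and later filters take precedence."""
    included = True  # with no filters, every file is transferred
    for kind, pattern in filters:
        if fnmatchcase(rel_path, pattern):
            included = kind == "include"
    return included


html_only = [("exclude", "*"), ("include", "*.html")]
for path in ["index.html", "blog/post.html", "css/site.css", "img/logo.png"]:
    print(path, is_included(path, html_only))
# index.html True
# blog/post.html True
# css/site.css False
# img/logo.png False
```

Reversing the two filters (include *.html first, then exclude *) would skip every file, because the final exclude matches everything.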

When accessing content within a bucket on S3, there are three different URLs that you can use. The first (default) URL is structured as follows:

http{s}://s3.{region-id}.amazonaws.com/{bucket-name}/{optional key prefix}/{key-name}

As you can see, the default naming schema makes it easy to understand: First you see the region the bucket resides in (from the region ID in the URL). Then you see the structure defined in the bucket/key-prefix/key combination.

Here are some examples of files in S3 buckets:

A file in the root of the everyonelovesaws bucket: https://s3.us-east-2.amazonaws.com/everyonelovesaws/index.html

A file with the key prefix key-prefix-2019/ in the everyonelovesaws bucket: https://s3.us-east-2.amazonaws.com/everyonelovesaws/key-prefix-2019/index.html

A file with the key prefix key-prefix-2019/08/20 in the everyonelovesaws bucket: https://s3.us-east-2.amazonaws.com/everyonelovesaws/key-prefix-2019/08/20/index.html
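Because the default URL is a simple string template, you can build it programmatically. The Python sketch below (`path_style_url` is a hypothetical helper) percent-encodes the key but keeps the / separators that form the key prefix, and it reproduces the three example URLs above.

```python
from urllib.parse import quote


def path_style_url(bucket: str, key: str, region: str, https: bool = True) -> str:
    """Build http{s}://s3.{region}.amazonaws.com/{bucket}/{key}."""
    scheme = "https" if https else "http"
    # Prefixes are just part of the key, so '/' stays unescaped
    return f"{scheme}://s3.{region}.amazonaws.com/{bucket}/{quote(key, safe='/')}"


for key in [
    "index.html",
    "key-prefix-2019/index.html",
    "key-prefix-2019/08/20/index.html",
]:
    print(path_style_url("everyonelovesaws", key, "us-east-2"))
```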

However, the default format might not be the most desirable, especially if you want to present the S3 data as part of your website. For example, suppose you want to host all your images on S3 and would like to point the subdomain images.mywebsite.com to an S3 bucket. The first thing to do is to create a bucket with exactly that name, images.mywebsite.com, so that you can create a CNAME in your domain without breaking the S3 request. S3 uses the hostname in the request to identify the bucket.

To create a CNAME, you can target the second type of FQDN that S3 provides for each bucket, which has the following format:

{bucket-name}.s3.{optional region-id}.amazonaws.com

As you can see, the region ID is optional, and the bucket name is a subdomain of s3.amazonaws.com, so it is easy to create a CNAME in your DNS service that points a subdomain to the S3 bucket. For the image redirection, based on the preceding syntax, you would create a record like this:

images.mywebsite.com CNAME images.mywebsite.com.s3.amazonaws.com.

If you want to include the region ID, you can optionally create an entry with the region ID in the target name.

Here are some working examples of a bucket called images.markocloud.com that is a subdomain of the markocloud.com domain:

FQDN with the region ID is serving the index.html key this way: http://images.markocloud.com.s3.us-east-1.amazonaws.com/index.html

FQDN without the region ID is serving the index.html key this way: http://images.markocloud.com.s3.amazonaws.com/index.html

FQDN with the CNAME on the markocloud.com domain is serving the index.html key this way: http://images.markocloud.com/index.html
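The virtual-hosted form and the matching CNAME can be generated the same way. The Python sketch below uses hypothetical helpers named `virtual_hosted_host` and `cname_record`. It reproduces the images.markocloud.com URLs above and emits the record in fully qualified zone-file form, with a trailing dot on both names. It assumes, as the text requires, that the bucket is named exactly like the subdomain.

```python
from typing import Optional


def virtual_hosted_host(bucket: str, region: Optional[str] = None) -> str:
    """Return {bucket}.s3[.{region}].amazonaws.com."""
    middle = f"s3.{region}" if region else "s3"
    return f"{bucket}.{middle}.amazonaws.com"


def cname_record(bucket: str, region: Optional[str] = None) -> str:
    """Zone-file CNAME mapping a subdomain onto the bucket of the same name."""
    return f"{bucket}. CNAME {virtual_hosted_host(bucket, region)}."


bucket = "images.markocloud.com"
print(f"http://{virtual_hosted_host(bucket, 'us-east-1')}/index.html")
# http://images.markocloud.com.s3.us-east-1.amazonaws.com/index.html
print(f"http://{virtual_hosted_host(bucket)}/index.html")
# http://images.markocloud.com.s3.amazonaws.com/index.html
print(cname_record(bucket))
# images.markocloud.com. CNAME images.markocloud.com.s3.amazonaws.com.
```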

Hosting a Static Website

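S3 is a file delivery service that works over HTTP/HTTPS. To host a static website, you need to make the bucket public by providing a bucket policy, and you need to enable the static website hosting option. Of course, you also need to upload all your static website files, including an index file. Note that on newer buckets, S3 Block Public Access is turned on by default and must be relaxed before a public bucket policy can be applied.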

To make a bucket serve a static website from the AWS CLI, you need to run the aws s3 website command using the following syntax:

aws s3 website s3://{bucket-name}/ --index-document {index-document-key} --error-document {optional-error-document-key}

To make the everyonelovesaws bucket into a static website, for example, you would simply enter the following:

aws s3 website s3://everyonelovesaws/ --index-document index.html

You now also need to apply a bucket policy to make the website accessible from the outside world. If you are creating your own static website, replace everyonelovesaws in the resource ARN ("Resource": "arn:aws:s3:::everyonelovesaws/*") with your bucket name, as demonstrated in Example 4-1.

Example 4-1 An IAM Statement That Allows Read Access to All Items in a Specific S3 Bucket

{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Sid": "PublicReadGetObject",
      "Effect": "Allow",
      "Principal": "*",
      "Action": "s3:GetObject",
      "Resource": "arn:aws:s3:::everyonelovesaws/*"
    }
  ]
}

As you can see, the policy allows anyone (the "*" principal) to perform the s3:GetObject action on every object in the bucket. This means everyone can read the content but cannot list the bucket or modify its objects.
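To see why this policy permits reading objects but not listing the bucket, match the requested action and resource against the statement. The following Python sketch is deliberately simplified and is not the real IAM evaluation logic. It checks only Allow statements and treats * in Action and Resource as a wildcard. It ignores Deny, Principal, and Condition entirely. `PUBLIC_READ_POLICY` is Example 4-1 rewritten as a Python dictionary, and `allowed_by` is a hypothetical helper.

```python
from fnmatch import fnmatchcase

# Example 4-1 as a Python dictionary
PUBLIC_READ_POLICY = {
    "Version": "2012-10-17",
    "Statement": [
        {
            "Sid": "PublicReadGetObject",
            "Effect": "Allow",
            "Principal": "*",
            "Action": "s3:GetObject",
            "Resource": "arn:aws:s3:::everyonelovesaws/*",
        }
    ],
}


def _as_list(value):
    return value if isinstance(value, list) else [value]


def allowed_by(policy: dict, action: str, resource: str) -> bool:
    """True if some Allow statement matches both the action and the resource.

    Simplified: ignores Deny statements, Principal, and Condition elements.
    """
    for stmt in policy["Statement"]:
        if stmt.get("Effect") != "Allow":
            continue
        # Action names compare case-insensitively; ARNs case-sensitively
        action_ok = any(
            fnmatchcase(action.lower(), a.lower()) for a in _as_list(stmt["Action"])
        )
        resource_ok = any(fnmatchcase(resource, r) for r in _as_list(stmt["Resource"]))
        if action_ok and resource_ok:
            return True
    return False


checks = [
    ("s3:GetObject", "arn:aws:s3:::everyonelovesaws/index.html"),  # True
    ("s3:ListBucket", "arn:aws:s3:::everyonelovesaws"),            # False
    ("s3:PutObject", "arn:aws:s3:::everyonelovesaws/index.html"),  # False
]
for action, resource in checks:
    print(action, allowed_by(PUBLIC_READ_POLICY, action, resource))
```

Listing fails for two reasons. The action differs, and s3:ListBucket applies to the bucket ARN itself, which the everyonelovesaws/* pattern does not match.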

You can save this bucket policy as everyonelovesaws.json and apply it to the bucket with the following command:

aws s3api put-bucket-policy --bucket everyonelovesaws --policy file://everyonelovesaws.json

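When static website hosting is enabled, S3 provides a website endpoint URL that follows this pattern (some older regions use a dash, s3-website-{region-id}, instead of a dot):

http://{bucket-name}.s3-website.{region-id}.amazonaws.com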

Note that in this example, as well as in the example of the CNAMEd images bucket, the URL uses plain HTTP and is therefore not secure. This is because HTTPS has a limitation with bucket names that contain dots. The default certificate that S3 presents is the wildcard certificate *.s3.amazonaws.com, and a wildcard matches exactly one DNS label in front of .s3.amazonaws.com. Every dot in a bucket name adds another label, which breaks the match. For the images.markocloud.com bucket, for example, the wildcard covers only the "com.s3.amazonaws.com" portion of the hostname and not the "images.markocloud." part. Requesting the virtual-hosted-style URL of that bucket over HTTPS therefore shows an insecure-certificate warning.
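The certificate problem comes down to how wildcard names are matched. Under the rules in RFC 6125, a * in the left-most label of a certificate name stands for exactly one DNS label. The Python sketch below applies that rule to the *.s3.amazonaws.com certificate. `wildcard_cert_matches` is a hypothetical helper, and it handles only a * that forms the whole left-most label, not partial wildcards such as f*.example.com.

```python
def wildcard_cert_matches(cert_name: str, hostname: str) -> bool:
    """True if hostname is covered by cert_name, where a leading "*" label
    stands for exactly one (non-empty) DNS label."""
    cert_labels = cert_name.lower().rstrip(".").split(".")
    host_labels = hostname.lower().rstrip(".").split(".")
    # The wildcard never absorbs extra dots, so label counts must be equal
    if len(cert_labels) != len(host_labels):
        return False
    # Every label after the first must match exactly
    if cert_labels[1:] != host_labels[1:]:
        return False
    first_cert, first_host = cert_labels[0], host_labels[0]
    return (first_cert == "*" and first_host != "") or first_cert == first_host


CERT = "*.s3.amazonaws.com"
for host in (
    "everyonelovesaws.s3.amazonaws.com",       # True
    "images.markocloud.com.s3.amazonaws.com",  # False
    "com.s3.amazonaws.com",                    # True
):
    print(host, wildcard_cert_matches(CERT, host))
```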

For hosted websites, you can, of course, have dots in the name of the bucket. However, if you tried to add an HTTPS CloudFront distribution and point it to such a bucket, you would break the certificate functionality by introducing a domain-like structure to the name. Nonetheless, all static websites on S3 would still be available on HTTP directly even if there were dots in the name. The final part of this chapter discusses securing a static website through HTTPS with a free certificate attached to a CloudFront distribution.

Versioning

S3 can create a new version of an object each time it is uploaded. Each upload of a key creates a separate version with its own version ID, and a separate copy of the file is kept on S3. This means you can always access each version of the file, and you are protected against accidental deletion. A simple delete only adds a delete marker that hides the object, while the previous versions are retained.

To enable versioning on your bucket, you can use the following command:

aws s3api put-bucket-versioning --bucket everyonelovesaws --versioning-configuration Status=Enabled

A bucket can be in one of three versioning states: unversioned (the default), versioning-enabled, or versioning-suspended. The versioning configuration itself accepts only Enabled or Suspended. Once versioning has been enabled, it cannot be turned off, only suspended. When versioning is suspended, new uploads do not create additional versions. Instead, each upload is stored with the version ID null and overwrites any existing null version, while the older versions are retained.
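The interplay of the three states is easiest to follow in a small model. The Python class below is a toy, in-memory sketch of the behavior described above, not the S3 API. An enabled bucket assigns new version IDs (the v1, v2, ... format is hypothetical). Unversioned and suspended writes use the null version ID, and deletes in a versioned bucket add a delete marker instead of removing data. `VersionedBucketModel` and its methods are hypothetical names.

```python
import itertools


class VersionedBucketModel:
    """Toy in-memory model of S3 versioning states (not the S3 API)."""

    def __init__(self):
        self.status = "Unversioned"  # the state of every new bucket
        self._history = {}  # key -> [(version_id, body)]; body None = delete marker
        self._counter = itertools.count(1)

    def set_versioning(self, status: str) -> None:
        # Only Enabled or Suspended can be set; there is no way back to Unversioned
        if status not in ("Enabled", "Suspended"):
            raise ValueError("status must be 'Enabled' or 'Suspended'")
        self.status = status

    def _append(self, key: str, body):
        history = self._history.setdefault(key, [])
        if self.status == "Enabled":
            version_id = f"v{next(self._counter)}"  # hypothetical ID format
        else:
            # Unversioned/Suspended writes use the "null" version ID and
            # replace any existing "null" version of the key
            version_id = "null"
            history[:] = [v for v in history if v[0] != "null"]
        history.append((version_id, body))
        return version_id

    def put(self, key: str, body: str) -> str:
        return self._append(key, body)

    def delete(self, key: str):
        if self.status == "Unversioned":
            self._history.pop(key, None)  # the object is really gone
            return None
        # Versioned buckets only add a delete marker; older versions stay
        return self._append(key, None)

    def get(self, key: str, version_id=None):
        history = self._history.get(key, [])
        if version_id is None:
            # The latest entry is current; None if it is a delete marker
            return history[-1][1] if history else None
        return next((body for vid, body in history if vid == version_id), None)

    def version_ids(self, key: str) -> list:
        return [vid for vid, _ in self._history.get(key, [])]


bucket = VersionedBucketModel()
bucket.set_versioning("Enabled")
first = bucket.put("index.html", "<h1>v1</h1>")
bucket.put("index.html", "<h1>v2</h1>")
bucket.delete("index.html")
print(bucket.get("index.html"))          # None
print(bucket.get("index.html", first))   # <h1>v1</h1>
bucket.set_versioning("Suspended")
bucket.put("index.html", "<h1>s1</h1>")
bucket.put("index.html", "<h1>s2</h1>")
print(bucket.version_ids("index.html"))  # ['v1', 'v2', 'v3', 'null']
```

The last line shows that the two suspended-state uploads share the single null version, while v1 and v2 and the delete marker (v3) survive.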

S3 Storage Tiers

When creating an object in a bucket, you can also select the storage class to which the object will belong. This can also be done automatically through data life cycling. S3 offers the following storage classes:

S3 Standard: General-purpose online storage with 99.99% availability and 99.999999999% durability (that is, “11 9s”).

S3 Infrequent Access: Same performance as S3 Standard but up to 40% cheaper with 99.9% availability SLA and the same “11 9s” durability.

S3 One Zone-Infrequent Access: A cheaper data tier in only one availability zone that can deliver an additional 25% savings over S3 Infrequent Access. It has the same durability, with 99.5% availability.

S3 Reduced Redundancy Storage (RRS): Previously this was a cheaper version of S3 providing 99.99% durability and 99.99% availability of objects. RRS cannot be used in a life cycling policy and is now more expensive than S3 Standard.

S3 Glacier: Less than one-fifth the price of S3 Standard, designed for archiving and long-term storage.

S3 Glacier Deep Archive: Costs about one-quarter of the price of Glacier and is the cheapest storage solution, at about $1 per terabyte per month. This solution is intended for very long-term storage.

Data Life Cycling

S3 supports automatic life cycling and expiration of objects in an S3 bucket. You can create rules to life cycle objects older than a certain time into cheaper storage. For example, you can set up a policy that will store any object older than 30 days on S3 Infrequent Access (S3 IA). You can add additional stages to move the object from S3 IA to S3 One Zone IA after 90 days and then push it out to Glacier after a year, when the object is no longer required to be online. Figure 4-3 illustrates S3 life cycling.

Figure 4-3 Illustration of an S3 Life Cycling Policy
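The example rule above could be written as a lifecycle configuration document. The Python sketch below builds one in the JSON shape used by aws s3api put-bucket-lifecycle-configuration, with transitions at 30, 90, and 365 days. The rule ID tiering-example is a hypothetical name, and the empty prefix filter applies the rule to the whole bucket. A small helper shows which storage class an object of a given age would have reached. Real transitions run asynchronously, so an object may move somewhat after the day it becomes eligible.

```python
import json

# Transition schedule from the example in the text (days since creation)
TRANSITIONS = [
    (30, "STANDARD_IA"),
    (90, "ONEZONE_IA"),
    (365, "GLACIER"),
]


def lifecycle_configuration(rule_id: str = "tiering-example") -> dict:
    """Lifecycle configuration in the shape accepted by the S3 API."""
    return {
        "Rules": [
            {
                "ID": rule_id,  # hypothetical rule name
                "Filter": {"Prefix": ""},  # empty prefix = every object
                "Status": "Enabled",
                "Transitions": [
                    {"Days": days, "StorageClass": storage_class}
                    for days, storage_class in TRANSITIONS
                ],
            }
        ]
    }


def storage_class_at(age_days: int) -> str:
    """Storage class an object would have reached after age_days under the rule."""
    current = "STANDARD"
    for days, storage_class in TRANSITIONS:
        if age_days >= days:
            current = storage_class
    return current


print(json.dumps(lifecycle_configuration(), indent=2))
for age in (10, 45, 120, 400):
    print(age, storage_class_at(age))
# 10 STANDARD / 45 STANDARD_IA / 120 ONEZONE_IA / 400 GLACIER
```

You could save the printed document to a file (for example, a hypothetical lifecycle.json) and apply it with aws s3api put-bucket-lifecycle-configuration --bucket everyonelovesaws --lifecycle-configuration file://lifecycle.json.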

S3 Security

When storing data in the S3 service, you need to consider the security of the data. First, you need to ensure proper access control to the buckets themselves. There are three ways to grant access to an S3 bucket:

IAM policy: You can attach IAM policies to users, groups, or roles to allow granular control over different levels of access (such as types of S3 API actions, like GET, PUT, or LIST) for one or more S3 buckets.

Bucket policy: Attached to the bucket itself as an inline policy, a bucket policy can allow granular control over different levels of access (such as types of S3 API actions, like GET, PUT, or LIST) for the bucket itself.

Bucket ACL: Attached to the bucket, an access control list (ACL) allows coarse-grained control over bucket access. ACLs are designed to easily share a bucket with a large group or anonymously when a need for read, write, or full control permissions over the bucket arises.

Both policy types allow for much better control over access to a bucket than does using an ACL.

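Example 4-2 demonstrates a policy that allows all S3 actions on the bucket called everyonelovesaws from the 192.168.100.0/24 CIDR range. Keep in mind that aws:SourceIp is compared with the public IP address of the requester. For requests that arrive through a VPC endpoint, the aws:VpcSourceIp condition key is used instead.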

Example 4-2 S3 Policy with a Source IP Condition

{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Effect": "Allow",
      "Principal": "*",
      "Action": "s3:*",
      "Resource": [
        "arn:aws:s3:::everyonelovesaws",
        "arn:aws:s3:::everyonelovesaws/*"
      ],
      "Condition": {
        "IpAddress": {"aws:SourceIp": "192.168.100.0/24"}
      }
    }
  ]
}
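The IpAddress condition in Example 4-2 is a CIDR membership test on the requester's address. The following Python sketch mimics that single condition operator with the standard ipaddress module. `source_ip_matches` is a hypothetical helper and not a full policy evaluator. The 203.0.113.10 address comes from a range reserved for documentation.

```python
import ipaddress


def source_ip_matches(source_ip: str, cidrs) -> bool:
    """Mimic the IpAddress condition operator: True if source_ip falls inside
    any of the given CIDR blocks (a single string or a list of strings)."""
    if isinstance(cidrs, str):
        cidrs = [cidrs]
    address = ipaddress.ip_address(source_ip)
    return any(address in ipaddress.ip_network(cidr) for cidr in cidrs)


ALLOWED_RANGE = "192.168.100.0/24"  # the range used in Example 4-2
for ip in ("192.168.100.25", "192.168.101.7", "203.0.113.10"):
    print(ip, source_ip_matches(ip, ALLOWED_RANGE))
# 192.168.100.25 True
# 192.168.101.7 False
# 203.0.113.10 False
```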

On top of access control to the data, you also need to consider the security of data during transit and at rest by applying encryption. To encrypt data being sent to the S3 bucket, you can either use client-side encryption or make sure to use the TLS S3 endpoint. (Chapter 1 covers encryption in transit in detail.) To encrypt data at rest, you have three options in S3:

S3 Server-Side Encryption (SSE-S3): SSE-S3 provides built-in encryption with an AWS managed encryption key. This service is available on an S3 bucket at no additional cost.

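S3 SSE-KMS: SSE-KMS protects data by using an encryption key managed in AWS Key Management Service (KMS), either the AWS managed key for S3 or a customer managed key. This option gives you more control over the encryption keys used in S3, such as key policies and auditing of key usage, but the underlying key infrastructure of the KMS service is still managed by AWS.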

S3 SSE-C: With the SSE-C option, S3 is configured with server-side encryption that uses a customer-provided encryption key. The encryption key is provided by the client within the request, and so each blob of data that is delivered to S3 is seamlessly encrypted with the customer-provided key. When the encryption is complete, S3 discards the encryption key so the only way to decrypt the data is to provide the same key when retrieving the object.
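With SSE-C, the key travels with every request in a set of HTTP headers. The Python sketch below builds those headers for a 256-bit key. It sets the algorithm (AES256), the Base64-encoded key, and the Base64-encoded MD5 digest of the key, which S3 uses to verify that the key arrived intact. `sse_c_headers` is a hypothetical helper. It only prepares the headers; signing and sending the request are left to an SDK or the CLI.

```python
import base64
import hashlib
import os


def sse_c_headers(key: bytes) -> dict:
    """Request headers carrying a customer-provided key for SSE-C."""
    if len(key) != 32:
        raise ValueError("SSE-C with AES256 needs a 256-bit (32-byte) key")
    return {
        "x-amz-server-side-encryption-customer-algorithm": "AES256",
        "x-amz-server-side-encryption-customer-key": base64.b64encode(key).decode(),
        # MD5 digest of the raw key, used by S3 as an integrity check
        "x-amz-server-side-encryption-customer-key-MD5": base64.b64encode(
            hashlib.md5(key).digest()
        ).decode(),
    }


customer_key = os.urandom(32)  # keep this safe: S3 does not store it
headers = sse_c_headers(customer_key)
print(sorted(headers))
```

Every GET or HEAD request for the object must include the same three headers, which is why losing the key means losing access to the data.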