Foundation Topics

Security Fundamentals

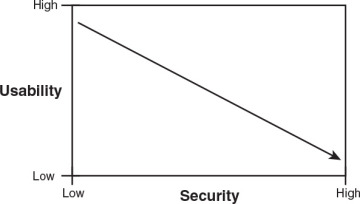

Security is about finding a balance, as all systems have limits. No one person or company has unlimited funds to secure everything, and we cannot always take the most secure approach. One way to secure a system from network attack is to unplug it and make it a standalone system. Although this system would be relatively secure from Internet-based attackers, its usability would be substantially reduced. The opposite approach of plugging it in directly to the Internet without any firewall, antivirus, or security patches would make it extremely vulnerable, yet highly accessible. So, here again, you see that the job of security professionals is to find a balance somewhere between security and usability. Figure 1-1 demonstrates this concept. What makes this so tough is that companies face many more different challenges today than in the past. Whereas many businesses used to be bricks and mortar, they are now bricks and clicks. Modern businesses face many challenges, such as the increased sophistication of cyber criminals and the evolution of advanced persistent threats.

Figure 1-1 Security Versus Usability

To find this balance and meet today’s challenges, you need to know what the goals of the organization are, what security is, and how to measure the threats to security. The next section discusses the goals of security.

Goals of Security

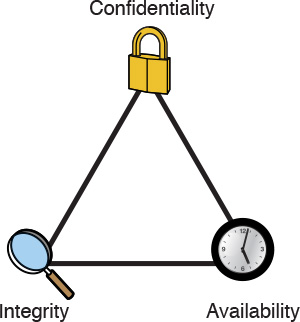

There are many ways in which security can be achieved, but it’s widely agreed that the security triad of confidentiality, integrity, and availability (CIA) forms the basic building blocks of any good security initiative.

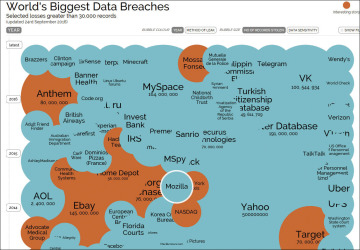

Confidentiality addresses the secrecy and privacy of information. Physical examples of confidentiality include locked doors, armed guards, and fences. In the logical world, confidentiality must protect data in storage and in transit. For real-life examples of failures of confidentiality, look no further than two large-scale corporate breaches reported in 2016: Yahoo’s loss of a billion passwords, which occurred in the 2012 and 2013 timeframe, and the August 2016 revelation that more than 68 million Dropbox users had their usernames and passwords compromised in 2012. The graphic shown in Figure 1-2 from www.informationisbeautiful.net shows the scope of security breaches over the past several years. It offers a few examples of the scope of personally identifiable information (PII) that has been exposed.

Figure 1-2 World’s Biggest Data Breaches as of September 2016

Integrity is the second piece of the CIA security triad. Integrity provides for the correctness of information. It allows users of information to have confidence in its correctness. Correctness doesn’t mean that the data is accurate, just that it hasn’t been modified in storage or transit. Integrity can apply to paper or electronic documents, but it is much easier to verify and protect in a paper document than in an electronic one. Integrity must be protected in two modes: storage and transit.

Information in storage can be protected if you use access and audit controls. Cryptography can also protect information in storage through the use of hashing algorithms. Real-life examples of this technology can be seen in programs such as Tripwire, MD5Sum, and Windows Resource Protection (WRP). Integrity in transit can be ensured primarily by the protocols used to transport the data. These security controls include hashing and cryptography.
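As a rough illustration of how hashing supports integrity in storage, the following minimal sketch records a baseline digest for a file and later checks whether the file still matches it. It uses SHA-256 from the Python standard library; the function names `sha256_of_file` and `is_unmodified` are hypothetical and chosen only for this example, and the sketch is not how Tripwire or WRP are implemented.

```python
import hashlib


def sha256_of_file(path, chunk_size=65536):
    """Return the SHA-256 hex digest of the file at `path`."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        # Read in chunks so large files do not need to fit in memory.
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def is_unmodified(path, baseline_digest):
    """Return True if the file's current digest equals the recorded baseline."""
    return sha256_of_file(path) == baseline_digest
```

In use, the baseline digest would be recorded while the file is known to be good and stored somewhere an attacker cannot alter it. A later mismatch shows only that the file changed, not who changed it or why.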

Availability is the third leg of the CIA triad. Availability simply means that when a legitimate user needs the information, it should be available. As an example, access to a backup facility 24×7 does not help if there are no updated backups from which to restore. Similarly, cloud storage is of no use if the cloud provider is down. Service-level agreements (SLA) are one way availability can be ensured, and backups are another. Backups provide a copy of critical information should files and data be destroyed or equipment fail. Failover equipment is another way to ensure availability. Systems such as redundant array of inexpensive disks (RAID) and services such as redundant sites (hot, cold, and warm) are two other examples. Disaster recovery is tied closely to availability, as it’s all about getting critical systems up and running quickly. Denial of service (DoS) is an attack against availability. Figure 1-3 shows an example of the CIA triad.

Figure 1-3 The CIA Triad
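An availability target, such as one written into an SLA, can be turned into a downtime budget with simple arithmetic. The sketch below assumes a 365-day year, and the targets shown are hypothetical values chosen for illustration. The function name `allowed_downtime_hours` is likewise an assumption of this example.

```python
def allowed_downtime_hours(availability_percent, period_hours=365 * 24):
    """Hours of downtime permitted over the period at the given availability."""
    if not 0 <= availability_percent <= 100:
        raise ValueError("availability_percent must be between 0 and 100")
    return (1 - availability_percent / 100) * period_hours


# Hypothetical availability targets, evaluated over one 365-day year.
for target in (99.0, 99.9, 99.99):
    hours = allowed_downtime_hours(target)
    print(f"{target}% availability allows about {hours:.2f} hours of downtime")
```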

Risk, Assets, Threats, and Vulnerabilities

As with any new technology topic, to better understand the security field, you must learn the terminology that is used. To be a security professional, you need to understand the relationship between risk, threats, assets, and vulnerabilities.

Risk is the probability or likelihood of the occurrence or realization of a threat. There are three basic elements of risk: assets, threats, and vulnerabilities. To deal with risk, the U.S. federal government has adopted a risk management framework (RMF). The RMF process is based on the key concepts of mission- and risk-based, cost-effective, and enterprise information system security. NIST Special Publication 800-37, “Guide for Applying the Risk Management Framework to Federal Information Systems,” transforms the traditional Certification and Accreditation (C&A) process into the six-step Risk Management Framework (RMF). Let’s look at the various components that are associated with risk, which include assets, threats, and vulnerabilities.

An asset is any item of economic value owned by an individual or corporation. Assets can be real—such as routers, servers, hard drives, and laptops—or assets can be virtual, such as formulas, databases, spreadsheets, trade secrets, and processing time. Regardless of the type of asset discussed, if the asset is lost, damaged, or compromised, there can be an economic cost to the organization.

A threat sets the stage for risk and is any agent, condition, or circumstance that could potentially cause harm, loss, or damage, or compromise an IT asset or data asset. From a security professional’s perspective, threats can be categorized as events that can affect the confidentiality, integrity, or availability of the organization’s assets. These threats can result in destruction, disclosure, modification, corruption of data, or denial of service. Examples of the types of threats an organization can face include the following:

Natural disasters, weather, and catastrophic damage: Hurricanes, such as Matthew (which hit Florida and the U.S. East Coast in 2016), storms, weather outages, fire, flood, earthquakes, and other natural events compose an ongoing threat.

Hacker attacks: Attacks by an insider or outsider who is unauthorized and purposely targets an organization’s components, systems, or data.

Cyberattack: Attacks that target critical national infrastructures such as water plants, electric plants, gas plants, oil refineries, gasoline refineries, nuclear power plants, waste management plants, and so on. Stuxnet is an example of a tool designed for such a purpose.

Viruses and malware: An entire category of software tools that are malicious and are designed to damage or destroy a system or data. Cryptowall and Sality are two examples of malware.

Disclosure of confidential information: Anytime a disclosure of confidential information occurs, it can be a critical threat to an organization if that disclosure causes loss of revenue, causes potential liabilities, or provides a competitive advantage to an adversary.

Denial of service (DoS) or distributed DoS (DDoS) attacks: An attack against availability that is designed to bring the network or access to a particular TCP/IP host/server to its knees by flooding it with useless traffic. Today, most DoS attacks are launched via botnets, whereas in the past tools such as the Ping of Death or Teardrop may have been used. As with malware, hackers constantly develop new tools, so botnets such as Storm and Mariposa are replaced with other, more current threats.

A vulnerability is a weakness in the system design, implementation, software, or code, or the lack of a mechanism. A specific vulnerability might manifest as anything from a weakness in system design to the implementation of an operational procedure. Vulnerabilities might be eliminated or reduced by the correct implementation of safeguards and security countermeasures.

Vulnerabilities and weaknesses are common mainly because there isn’t any perfect software or code in existence. Vulnerabilities can be found in each of the following:

Applications: Software and applications come with tons of functionality. Applications may be configured for usability rather than for security. Applications may be in need of a patch or update that may or may not be available. Attackers targeting applications have a target-rich environment to examine. Just think of all the applications running on your home or work computer.

Operating systems: Operating system software is loaded on workstations and servers. Attackers can search for vulnerabilities in operating systems that have not been patched or updated.

Misconfiguration: The configuration file and configuration setup for the device or software may be misconfigured or may be deployed in an unsecure state. This might be open ports, vulnerable services, or misconfigured network devices. Just consider wireless networking. Can you detect any wireless devices in your neighborhood that have encryption turned off?

Shrinkwrap software: The application or executable file that is run on a workstation or server. When installed on a device, it can have tons of functionality or sample scripts or code available.

Vulnerabilities are not the only concern the ethical hacker will have. Ethical hackers must also understand how to protect data. One way to protect data is through backup.

Backing Up Data to Reduce Risk

One way to reduce risk is by backing up data. While backups won’t prevent problems such as ransomware, they can help mitigate the threat. The method your organization chooses depends on several factors:

How often should backups occur?

How much data must be backed up?

How will backups be stored and transported offsite?

How much time do you have to perform the backup each day?

The following are the three types of backup methods. Each backup method has benefits and drawbacks. Full backups take the longest time to create, whereas incremental backups take the least.

Full backups: During a full backup, all data is backed up, and no files are skipped or bypassed; you simply designate which server to back up. A full backup takes the longest to perform and the least time to restore when compared to differential or incremental backups because only one set of tapes is required.

Differential backups: Using differential backup, a full backup is typically done once a week and a daily differential backup is completed that copies all files that have changed since the last full backup. If you need to restore, you need the last full backup and the most recent differential backup.

Incremental backups: This backup method works by means of a full backup scheduled for once a week, and only files that have changed since the previous full backup or previous incremental backup are backed up each day. This is the fastest backup option, but it takes the longest to restore because the last full backup and every incremental backup made since then must be restored in order. Unlike differential backups, incremental backups reset the archive bit when files are copied; therefore, each incremental backup captures only the changes made since the most recent full or incremental backup.
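The restore trade-off among the three methods can be sketched in a few lines. The example below assumes a full backup on day 0 followed by one daily backup of the chosen type, and it lists which backup sets would be needed to restore after a given number of days. The function name and the set labels are hypothetical.

```python
def sets_needed_for_restore(method, days_since_full):
    """List the backup sets required to restore to the latest state.

    Assumes a full backup on day 0 and one daily backup of `method`
    on each following day.
    """
    if days_since_full < 0:
        raise ValueError("days_since_full must not be negative")
    if method == "full":
        # Every backup is complete, so only the latest one is needed.
        return [f"full day {days_since_full}"]
    if method == "differential":
        # The last full backup plus only the most recent differential.
        sets = ["full day 0"]
        if days_since_full > 0:
            sets.append(f"differential day {days_since_full}")
        return sets
    if method == "incremental":
        # The last full backup plus every incremental since, in order.
        return ["full day 0"] + [
            f"incremental day {day}" for day in range(1, days_since_full + 1)
        ]
    raise ValueError(f"unknown backup method: {method}")


for method in ("full", "differential", "incremental"):
    print(method, sets_needed_for_restore(method, 3))
```

The incremental case returns the longest list, which is why that method is the slowest to restore even though each daily backup is the quickest to create.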

Defining an Exploit

An exploit refers to a piece of software, a tool, a technique, or a process that takes advantage of a vulnerability that leads to access, privilege escalation, loss of integrity, or denial of service on a computer system. Exploits are dangerous because all software has vulnerabilities; hackers and perpetrators know that there are vulnerabilities and seek to take advantage of them. Although most organizations attempt to find and fix vulnerabilities, some organizations lack sufficient funds for securing their networks. Sometimes you may not even know the vulnerability exists; an exploit that targets such an unknown vulnerability is known as a zero-day exploit. Even when you do know there is a problem, you are burdened with the fact that a window exists between when a vulnerability is discovered and when a patch is available to prevent the exploit. The more critical the server, the slower it is usually patched. Management might be afraid of interrupting the server or afraid that the patch might affect stability or performance. Finally, the time required to deploy and install the software patch on production servers and workstations exposes an organization’s IT infrastructure to an additional period of risk.

Risk Assessment

A risk assessment is a process to identify potential security hazards and evaluate what would happen if a hazard or unwanted event were to occur. There are two approaches to risk assessment: qualitative and quantitative. Qualitative risk assessment methods use scenarios to drive a prioritized list of critical concerns and do not focus on dollar amounts. Example impacts might be identified as critical, high, medium, or low. Quantitative risk assessment assigns a monetary value to the asset. It then uses the anticipated exposure to calculate a dollar cost. These steps are as follows:

Step 1. Determine the single loss expectancy (SLE): This step involves determining the single amount of loss you could incur on an asset if a threat becomes realized or the amount of loss you expect to incur if the asset is exposed to the threat one time. SLE is calculated as follows: SLE = asset value × exposure factor. The exposure factor (EF) is the subjective, potential portion of the loss to a specific asset if a specific threat were to occur.

Step 2. Evaluate the annual rate of occurrence (ARO): The purpose of evaluating the ARO is to determine how often an unwanted event is likely to occur on an annualized basis.

Step 3. Calculate the annual loss expectancy (ALE): This final step of the quantitative assessment seeks to combine the potential loss and rate per year to determine the magnitude of the risk. This is expressed as annual loss expectancy (ALE), which is calculated as follows: ALE = SLE × ARO.

CEH exam questions might ask you to use the SLE and ALE risk formulas. As an example, a question might ask, “If you have data worth $500 that has an exposure factor of 50 percent due to lack of countermeasures such as antivirus, what would the SLE be?” You would use the following formula to calculate the answer:

Asset value × EF = SLE, or $500 × .50 = $250

As part of a follow-up test question, could you calculate the annual loss expectancy (ALE) if you knew that this type of event typically happened four times a year? Yes, as this would mean the ARO is 4. Therefore:

ALE = SLE × ARO or $250 × 4 = $1,000

This means that, on average, the loss is $1,000 per year.
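The worked example above can be reproduced with a short sketch. The function names are hypothetical; the formulas are the ones given in the three steps (SLE = asset value × exposure factor, ALE = SLE × ARO), and the inputs are the $500 asset, 50 percent exposure factor, and ARO of 4 from the sample question.

```python
def single_loss_expectancy(asset_value, exposure_factor):
    """SLE = asset value x exposure factor (EF given as a fraction, 0 to 1)."""
    if not 0 <= exposure_factor <= 1:
        raise ValueError("exposure_factor must be between 0 and 1")
    return asset_value * exposure_factor


def annual_loss_expectancy(sle, annual_rate_of_occurrence):
    """ALE = SLE x ARO."""
    return sle * annual_rate_of_occurrence


sle = single_loss_expectancy(500, 0.50)
ale = annual_loss_expectancy(sle, 4)
print(sle, ale)  # prints: 250.0 1000.0
```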

Because the organization cannot provide complete protection for all of its assets, a system must be developed to rank risk and vulnerabilities. Organizations must seek to identify high-risk and high-impact events for protective mechanisms. Part of the job of an ethical hacker is to identify potential vulnerabilities to these critical assets, determine potential impact, and test systems to see whether they are vulnerable to exploits while working within the boundaries of laws and regulations.
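One simple way to rank risks, assuming quantitative estimates are available, is to sort them by ALE. The sketch below is only an illustration: the `Risk` class, the `rank_by_ale` function, and every figure in the sample data are hypothetical and are not taken from any real assessment.

```python
from dataclasses import dataclass


@dataclass
class Risk:
    name: str
    asset_value: float      # monetary value of the asset
    exposure_factor: float  # fraction of the asset lost per event (0 to 1)
    annual_rate: float      # expected occurrences per year (ARO)

    @property
    def ale(self):
        """ALE = asset value x exposure factor x ARO."""
        return self.asset_value * self.exposure_factor * self.annual_rate


def rank_by_ale(risks):
    """Return the risks ordered from highest to lowest ALE."""
    return sorted(risks, key=lambda risk: risk.ale, reverse=True)


# Hypothetical sample data for illustration only.
sample_risks = [
    Risk("laptop theft", 1500, 1.0, 3),
    Risk("database breach", 100000, 0.5, 0.25),
    Risk("web server outage", 20000, 0.25, 2),
]

for risk in rank_by_ale(sample_risks):
    print(f"{risk.name}: {risk.ale:.2f}")
```

A ranking like this is only as good as its estimates, and a qualitative review may still move an item up or down the list.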

Security Testing

Security testing is the primary job of ethical hackers. These tests might be configured in such a way that the ethical hackers have no knowledge, full knowledge, or partial knowledge of the target of evaluation (TOE).

The goal of the security test (regardless of type) is for the ethical hacker to test the TOE’s security controls and evaluate and measure its potential vulnerabilities.

No-Knowledge Tests (Black Box)

No-knowledge testing is also known as black box testing. Simply stated, the security team has no knowledge of the target network or its systems. Black box testing simulates an outsider attack, as outsiders usually don’t know anything about the network or systems they are probing. The attacker must gather all types of information about the target to begin to profile its strengths and weaknesses. The advantages of black box testing include the following:

The test is unbiased because the designer and the tester are independent of each other.

The tester has no prior knowledge of the network or target being examined. Therefore, there are no preconceptions about the function of the network.

A wide range of reconnaissance work is usually done to footprint the organization, which can help identify information leakage.

The test examines the target in much the same way as an external attacker.

The disadvantages of black box testing include the following:

Performing the security tests can take more time than partial- or full-knowledge testing.

It is usually more expensive because it takes more time to perform.

It focuses only on what external attackers see, whereas in reality many attacks are launched by insiders.

Full-Knowledge Testing (White Box)

White box testing takes the opposite approach of black box testing. This form of security test takes the premise that the security tester has full knowledge of the network, systems, and infrastructure. This information allows the security tester to follow a more structured approach and not only review the information that has been provided but also verify its accuracy. So, although black box testing will usually spend more time gathering information, white box testing will spend that time probing for vulnerabilities.

Partial-Knowledge Testing (Gray Box)

In the world of software testing, gray box testing is described as a partial-knowledge test. EC-Council literature describes gray box testing as a form of internal test. Therefore, the goal is to determine what insiders can access. This form of test might also prove useful to the organization because so many attacks are launched by insiders.

Types of Security Tests

Several different types of security tests can be performed. These can range from those that merely examine policy to those that attempt to hack in from the Internet and mimic the activities of true hackers. These security tests are also known by many names, including the following:

Vulnerability testing

Network evaluations

Red-team exercises

Penetration testing

Host vulnerability assessment

Vulnerability assessment

Ethical hacking

No matter what the security test is called, it is carried out to make a systematic examination of an organization’s network, policies, and security controls. Its purpose is to determine the adequacy of security measures, identify security deficiencies, provide data from which to predict the effectiveness of potential security measures, and confirm the adequacy of such measures after implementation. Security tests can be defined as one of three types:

High-level assessment/audit: Also called a level I assessment, it is a top-down look at the organization’s policies, procedures, and guidelines. This type of vulnerability assessment or audit does not include any hands-on testing. The purpose of a top-down assessment is to answer three questions:

Do the applicable policies, procedures, and guidelines exist?

Are they being followed?

Is their content sufficient to guard against potential risk?

Network evaluation: Also called a level II assessment, it has all the elements specified in a level I assessment and adds hands-on activities such as information gathering, scanning, and vulnerability-assessment scanning. Throughout this book, tools and techniques used to perform this type of assessment are discussed.

Penetration test: Also referred to as a level III assessment. Unlike assessments and evaluations, penetration tests are adversarial in nature and look to see what an outsider can access and control. Penetration tests are less concerned with policies and procedures and are more focused on finding low-hanging fruit and seeing what a hacker can accomplish on the network. This book offers many examples of the tools and techniques used in penetration tests.

Just remember that penetration tests are not fully effective if an organization does not have the policies and procedures in place to control security. Without adequate policies and procedures, it’s almost impossible to implement real security. Documented controls are required. If none are present, you should evaluate existing practices.

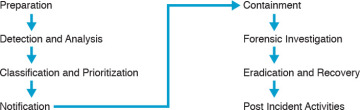

Security policies are the foundation of the security infrastructure. There can be many different types of policies, such as access control, password, user account, email, acceptable use, and incident response. As an example, an incident response plan consists of actions to be performed in responding to and recovering from incidents. There are several slightly different approaches to incident response. The EC-Council approach to incident response follows the steps shown in Figure 1-4.

Figure 1-4 The Incident Response Process

You might be tasked with building security policies based on existing activities and known best practices. Good and free resources for accomplishing such a task are the SANS policy templates, available at http://www.sans.org/security-resources/policies/. How do ethical hackers play a role in these tests? That’s the topic of the next section.

Hacker and Cracker Descriptions

To understand your role as an ethical hacker, it is important to know the players. Originally, the term hacker was used for a computer enthusiast. A hacker was a person who enjoyed understanding the internal workings of a system, computer, and computer network. Over time, the popular press began to describe hackers as individuals who broke into computers with malicious intent. The industry responded by developing the word cracker, which is short for criminal hacker. The term cracker was developed to describe individuals who seek to compromise the security of a system without permission from an authorized party. With all this confusion over how to distinguish the good guys from the bad guys, the term ethical hacker was coined. An ethical hacker is an individual who performs security tests and other vulnerability-assessment activities to help organizations secure their infrastructures. Sometimes ethical hackers are referred to as white hat hackers.

Hacker motives and intentions vary. Some hackers are strictly legitimate, whereas others routinely break the law. Let’s look at some common categories:

White hat hackers: These individuals perform ethical hacking to help secure companies and organizations. Their belief is that you must examine your network in the same manner as a criminal hacker to better understand its vulnerabilities.

Black hat hackers: These individuals perform illegal activities.

Gray hat hackers: These individuals usually follow the law but sometimes venture over to the darker side of black hat hacking. It would be unethical to employ these individuals to perform security duties for your organization because you are never quite clear where they stand. Think of them as the character of Luke in Star Wars. While wanting to use the force of good, he is also drawn to the dark side.

Suicide hackers: These are individuals who may carry out an attack even if they know there is a high chance that they will get caught and serve a long prison term.

Hackers usually follow a fixed methodology that includes the following steps:

Reconnaissance and footprinting: Can be both passive and active.

Scanning and enumeration: Can include the use of port scanning tools and network mappers.

Gaining access: The entry point into the network, application, or system.

Maintaining access: Techniques used to maintain control, such as escalation of privilege.

Covering tracks: Planting rootkits and backdoors and clearing logs are activities normally performed at this step.
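To make the scanning step more concrete, the following minimal sketch checks whether a single TCP port accepts a connection, which is the basic idea behind a TCP connect scan. It uses only the Python standard library; the function name `is_tcp_port_open` is hypothetical, and real port scanners do far more than this. A check like this should be run only against systems you are authorized to test.

```python
import socket


def is_tcp_port_open(host, port, timeout=1.0):
    """Return True if a full TCP connection to host:port succeeds."""
    try:
        # create_connection performs the complete TCP handshake.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and unreachable hosts.
        return False
```

For example, `is_tcp_port_open("127.0.0.1", 80)` would report whether a web server is listening on the local machine.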

Now let’s turn our attention to who these attackers are and what security professionals are up against.

Who Attackers Are

Ethical hackers are up against several types of individuals in the battle to secure the network. The following list presents some of the more commonly used terms for these attackers:

Phreakers: The original hackers. These individuals hacked telecommunication and PBX systems to explore the capabilities and make free phone calls. Their activities include physical theft, stolen calling cards, access to telecommunication services, reprogramming of telecommunications equipment, and compromising user IDs and passwords to gain unauthorized use of facilities, such as phone systems and voicemail.

Script kiddies: A term used to describe often younger attackers who use widely available freeware vulnerability-assessment tools and hacking tools that are designed for attacking purposes only. These attackers usually do not have programming or hacking skills and, given the techniques used by most of these tools, can be defended against with the proper security controls and risk-mitigation strategies.

Disgruntled employees: Employees who have lost respect for, and integrity toward, their employer. These individuals might or might not have more skills than the script kiddie. Many times, their rage and anger blind them. They rank as a potentially high risk because they have insider status, especially if access rights and privileges were provided or managed by the individual.

Software crackers/hackers: Individuals who have skills in reverse engineering software programs and, in particular, licensing registration keys used by software vendors when installing software onto workstations or servers. Although many individuals are eager to partake of their services, anyone who downloads programs with cracked registration keys is breaking the law and is exposed to a greater risk from malicious code that might have been injected into the software.

Cyberterrorists/cybercriminals: An increasing category of threat that can be used to describe individuals or groups of individuals who are usually funded to conduct clandestine or espionage activities on governments, corporations, and individuals in an unlawful manner. These individuals are typically engaged in sponsored acts of defacement, DoS/DDoS attacks, identity theft, financial theft, or worse, compromising critical infrastructures in countries, such as nuclear power plants, electric plants, water plants, and so on. These attacks may take months or years and are described as advanced persistent threats (APTs).

System crackers/hackers: Elite hackers who have specific expertise in attacking vulnerabilities of systems and networks by targeting operating systems. These individuals get the most attention and media coverage because of the globally affected malware, botnets, and Trojans that are created by system crackers/hackers. System crackers/hackers perform interactive probing activities to exploit security defects and security flaws in network operating systems and protocols.

Now that you have an idea who the adversary is, let’s briefly discuss ethical hackers.

Ethical Hackers

Ethical hackers perform penetration tests. They perform the same activities a hacker would but without malicious intent. They must work closely with the host organization to understand what the organization is trying to protect, who they are trying to protect these assets from, and how much money and resources the organization is willing to expend to protect the assets.

By following a methodology similar to that of an attacker, ethical hackers seek to see what type of public information is available about the organization. Information leakage can reveal critical details about an organization, such as its structure, assets, and defensive mechanisms. After the ethical hacker gathers this information, it is evaluated to determine whether it poses any potential risk. The ethical hacker further probes the network at this point to test for any unseen weaknesses.

Penetration tests are sometimes performed in a double-blind environment. This means that the internal security team has not been informed of the penetration test. This serves an important purpose, allowing management to gauge the security team’s responses to the ethical hacker’s probing and scanning. Did they notice the probes, or have the attempted attacks gone unnoticed?

Now that the activities performed by ethical hackers have been described, let’s spend some time discussing the skills that ethical hackers need, the different types of security tests that ethical hackers perform, and the ethical hacker rules of engagement.

Required Skills of an Ethical Hacker

Ethical hackers need hands-on security skills. Although you do not have to be an expert in everything, you should have an area of expertise. Security tests are usually performed by teams of individuals, where each individual has a core area of expertise. These skills include the following:

Routers: Knowledge of routers, routing protocols, and access control lists (ACLs). Certifications such as Cisco Certified Network Associate (CCNA) and Cisco Certified Internetwork Expert (CCIE) can be helpful.

Microsoft: Skills in the operation, configuration, and management of Microsoft-based systems. These can run the gamut from Windows 7 to Windows Server 2012. These individuals might be Microsoft Certified Solutions Associate (MCSA) or Microsoft Certified Solutions Expert (MCSE) certified.

Linux: A good understanding of the Linux/UNIX OS. This includes security settings, configuration, and services such as Apache. These individuals may be Fedora or Linux+ certified.

Firewalls: Knowledge of firewall configuration and the operation of intrusion detection systems (IDS) and intrusion prevention systems (IPS) can be helpful when performing a security test. Individuals with these skills may hold certifications such as Cisco Certified Network Associate Security (CCNA Security) or Check Point Certified Security Administrator (CCSA).

Programming: Knowledge of programming, including SQL, programming languages such as C++, Ruby, C#, C, and Java, and scripting languages such as PHP.

Mainframes: Although mainframes do not hold the position of dominance they once had in business, they still are widely used. If the organization being assessed has mainframes, the security teams would benefit from having someone with that skill set on the team.

Network protocols: Most modern networks are Transmission Control Protocol/Internet Protocol (TCP/IP). Someone with good knowledge of networking protocols, as well as how these protocols function and can be manipulated, can play a key role in the team. These individuals may possess certifications in other operating systems or hardware or may even possess a CompTIA Network+, Security+, or Advanced Security Practitioner (CASP) certification.

Project management: Someone will have to lead the security test team, and if you are chosen to be that person, you will need a variety of the skills and knowledge types listed previously. It can also be helpful to have good project management skills. The parameters of a project are typically time, scope, and cost. After all, you will be defining the project scope when leading a pen test team. Individuals in this role may benefit from having Project Management Professional (PMP) certification.

On top of all this, ethical hackers need to have good report writing skills and must always try to stay abreast of current exploits, vulnerabilities, and emerging threats, as their goal is to stay a step ahead of malicious hackers.

Modes of Ethical Hacking

With all this talk of the skills that an ethical hacker must have, you might be wondering how the ethical hacker can put these skills to use. An organization’s IT infrastructure can be probed, analyzed, and attacked in a variety of ways. Some of the most common modes of ethical hacking are described here:

Information gathering: This testing technique seeks to see what type of information is leaked by the company and how an attacker might leverage this information.

External penetration testing: This ethical hack seeks to simulate the types of attacks that could be launched across the Internet. It could target Hypertext Transfer Protocol (HTTP), Simple Mail Transfer Protocol (SMTP), Structured Query Language (SQL), or any other available service.

Internal penetration testing: This ethical hack simulates the types of attacks and activities that could be carried out by an authorized individual with a legitimate connection to the organization’s network.

Network gear testing: This testing technique examines network devices such as firewalls, IDSs, routers, and switches.

DoS testing: This testing technique can be used to stress test systems or to verify their ability to withstand a DoS attack.

Wireless network testing: This testing technique looks at wireless systems. This might include wireless networking systems, RFID, ZigBee, Bluetooth, or any wireless device.

Application testing: Application testing is designed to examine input controls and how data is processed. All areas of the application may be examined.

Social engineering: Social engineering attacks target the organization’s employees and seek to manipulate them to gain privileged information. Employee training, proper controls, policies, and procedures can go a long way in defeating this form of attack.

Physical security testing: This simulation seeks to test the organization’s physical controls. Systems such as doors, gates, locks, guards, closed circuit television (CCTV), and alarms are tested to see whether they can be bypassed.

Authentication system testing: This simulated attack is tasked with assessing authentication controls. If the controls can be bypassed, the ethical hacker might probe to see what level of system control can be obtained.

Database testing: This testing technique is targeted toward SQL servers.

Communication system testing: This testing technique examines communications such as PBX, Voice over IP (VoIP), modems, and voice communication systems.

Stolen equipment attack: This simulation is closely related to a physical attack because it targets the organization’s equipment. It could seek to target the CEO’s laptop or the organization’s backup tapes. No matter what the target, the goal is the same: extract critical information, usernames, and passwords.

Every ethical hacker must abide by the following rules when performing the tests described previously. If you do not, the consequences can include loss of your job, civil penalties, or even jail time:

Never exceed the limits of your authorization: Every assignment will have rules of engagement. This document includes not only what you are authorized to target but also the extent to which you are authorized to control such systems. If you are only authorized to obtain a prompt on the target system, downloading passwords and starting a crack on these passwords would be in excess of what you have been authorized to do.

Protect yourself by setting up damage limitations: There has to be a nondisclosure agreement (NDA) between the client and the tester to protect them both. You should also consider liability insurance and an errors and omissions policy. Items such as the NDA, rules of engagement, project scope, and resumes of individuals on the penetration testing team may all be bundled together for the client into one package.

Be ethical: That’s right; the big difference between a hacker and an ethical hacker is the word ethics. Ethics is a set of moral principles about what is correct or the right thing to do. Ethical standards sometimes differ from legal standards in that laws define what we must do or not do, whereas ethics define what we should do or not do.

Maintain confidentiality: During security evaluations, you will likely be exposed to many types of confidential information. You have both a legal and a moral duty to treat this information with the utmost privacy. You should not share this information with third parties and should not use it for any unapproved purposes. There is an obligation to protect the information sent between the tester and the client. This has to be specified in an NDA.

Do no harm: It’s of utmost importance that you do no harm to the systems you test. Again, a major difference between a hacker and an ethical hacker is that an ethical hacker should do no harm. Misused security tools can lock out critical accounts, cause denial of service, and crash critical servers or applications. Take care to prevent these events unless that is the goal of the test.
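The first rule above, never exceeding your authorization, lends itself to a simple pre-action check. The following is a minimal sketch, not a standard tool: the network range, the action names, and the `is_authorized` helper are all hypothetical and would come from the signed rules of engagement in a real assignment.

```python
import ipaddress

# Hypothetical rules of engagement. The network below is a documentation
# range chosen for illustration, not a real client network.
AUTHORIZED_NETWORKS = [ipaddress.ip_network("192.0.2.0/24")]

# Hypothetical action names, ordered from least to most intrusive.
ACTION_LEVELS = ["scan", "obtain_prompt", "dump_passwords"]

# The deepest action the client has approved in this example.
MAX_AUTHORIZED_ACTION = "obtain_prompt"


def is_authorized(target: str, action: str) -> bool:
    """Return True only if both the target and the action are in scope.

    Raises ValueError if the target is not a valid IP address or the
    action is not one of ACTION_LEVELS.
    """
    address = ipaddress.ip_address(target)
    in_scope = any(address in network for network in AUTHORIZED_NETWORKS)
    allowed = ACTION_LEVELS.index(action) <= ACTION_LEVELS.index(MAX_AUTHORIZED_ACTION)
    return in_scope and allowed


if __name__ == "__main__":
    print(is_authorized("192.0.2.10", "obtain_prompt"))   # in scope, approved depth
    print(is_authorized("192.0.2.10", "dump_passwords"))  # in scope, too intrusive
    print(is_authorized("198.51.100.5", "scan"))          # outside the approved range
```

Running a check like this before each step mirrors the example in the rule: obtaining a prompt is allowed, but downloading passwords from the same host is not.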

Test Plans—Keeping It Legal

Most of us make plans before we take a big trip or vacation. We think about what we want to see, how we plan to spend our time, what activities are available, and how much money we can spend and not regret it when the next credit card bill arrives. Ethical hacking is much the same minus the credit card bill. Many details need to be worked out before a single test is performed. If you or your boss is tasked with managing this project, some basic questions need to be answered, such as what’s the scope of the assessment, what are the driving events, what are the goals of the assessment, what will it take to get approval, and what’s needed in the final report.

Before an ethical hacking test can begin, the scope of the engagement must be determined. Defining the scope of the assessment is one of the most important parts of the ethical hacking process. At some point, you will be meeting with management to start the discussions of the how and why of the ethical hack. Before this meeting ever begins, you will probably have some idea what management expects this security test to accomplish. Companies that decide to perform ethical hacking activities don’t do so in a vacuum. You need to understand the business reasons behind this event. Companies can decide to perform these tests for various reasons. The most common reasons include the following:

A breach in security: One or more events have occurred that highlight a lapse in security. It could be that an insider was able to access data that should have been unavailable, or it could be that an outsider was able to hack the organization’s web server.

Compliance with state, federal, regulatory, or other law or mandate: Compliance with state or federal laws is another event that might be driving the assessment. Companies can face huge fines and executives can face potential jail time if they fail to comply with state and federal laws. The Gramm-Leach-Bliley Act (GLBA), Sarbanes-Oxley (SOX), and Health Insurance Portability and Accountability Act (HIPAA) are three such laws. SOX requires accountability for public companies relating to financial information. HIPAA requires organizations to perform a vulnerability assessment. Your organization might decide to include ethical hacking in this test regime. The organization might also be attempting to comply with a standard such as ISO/IEC 27002. This information security standard was first published in December 2000 by the International Organization for Standardization and the International Electrotechnical Commission. This code of practice for information security management is considered a security standard benchmark and includes the following 14 main elements:

Information Security Policies

Organization of Information Security

Human Resource Security

Asset Management

Access Control

Cryptography

Physical and Environmental Security

Operations Security

Communications Security

System Acquisition, Development, and Maintenance

Supplier Relationships

Information Security Incident Management

Information Security Aspects of Business Continuity Management

Compliance

Due diligence: Due diligence is another reason a company might decide to perform a pen test. The new CEO might want to know how good the organization’s security systems really are, or it could be that the company is scheduled to go through a merger or is acquiring a new firm. If so, the pen test might occur before the purchase or after the event. These assessments are usually going to be held to a strict timeline. There is only a limited amount of time before the purchase, and if performed afterward, the organization will probably be in a hurry to integrate the two networks as soon as possible.

Test Phases

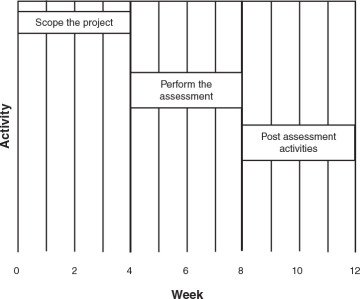

Security assessments in which ethical hacking activities will take place are composed of three phases: scoping the project, in which goals and guidelines are established; performing the assessment; and performing post-assessment activities, including the report and remediation activities. Figure 1-5 shows the three phases of the assessment and their typical times.

Figure 1-5 Ethical Hacking Phases and Times

Establishing Goals

The need to establish goals is critical. Although you might be ready to jump in and begin hacking, a good plan will detail the goals and objectives of the test. Common goals include system certification and accreditation, verification of policy compliance, and proof that the IT infrastructure has the capability to defend against technical attacks.

Are the goals to certify and accredit the systems being tested? Certification is a technical evaluation of the system that can be carried out by independent security teams or by the existing staff. Its goal is to uncover any vulnerabilities or weaknesses in the implementation. Your goal will be to test these systems to make sure that they are configured and operating as expected, that they are connected to and communicate with other systems in a secure and controlled manner, and that they handle data in a secure and approved manner.

If the goals of the penetration test are to determine whether current policies are being followed, the test methods and goals might be somewhat different. The security team will be looking at the controls implemented to protect information being stored, being transmitted, or being processed. This type of security test might not have as much hands-on hacking but might use more social engineering techniques and testing of physical controls. You might even direct one of the team members to perform a little dumpster diving.

The goal of a technical attack might be to see what an insider or outsider can access. Your goal might be to gather information as an outsider and then use that data to launch an attack against a web server or externally accessible system.

Regardless of what type of test you are asked to perform, you can ask some basic questions to help establish the goals and objectives of the tests, including the following:

What is the organization’s mission?

What specific outcomes does the organization expect?

What is the budget?

When will tests be performed: during work hours, after hours, on weekends?

How much time will the organization commit to completing the security evaluation?

Will insiders be notified?

Will customers be notified?

How far will the test proceed? Root the box, gain a prompt, or attempt to retrieve another prize, such as the CEO’s password?

Whom do you contact should something go wrong?

What are the deliverables?

What outcome is management seeking from these tests?
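One way to make sure none of the questions above is skipped is to record the answers and flag the gaps before the kickoff meeting ends. The sketch below is only illustrative; the key names and the `unanswered` helper are hypothetical labels for the questions in the list, not part of any standard methodology.

```python
# Hypothetical keys, one per question in the list above.
REQUIRED_ANSWERS = [
    "mission",
    "expected_outcomes",
    "budget",
    "test_window",
    "time_commitment",
    "insiders_notified",
    "customers_notified",
    "test_depth",
    "emergency_contact",
    "deliverables",
]


def unanswered(answers: dict) -> list:
    """Return the questions that have no answer yet, in checklist order.

    A missing key, None, or a blank string counts as unanswered.
    A boolean False is a valid answer (for example, insiders will not
    be notified).
    """
    missing = []
    for key in REQUIRED_ANSWERS:
        value = answers.get(key)
        if value is None or (isinstance(value, str) and not value.strip()):
            missing.append(key)
    return missing


if __name__ == "__main__":
    draft = {
        "mission": "Regional retail bank",
        "budget": "",
        "insiders_notified": False,
        "test_depth": "gain a prompt",
    }
    print(unanswered(draft))
```

An empty result means every question has at least a preliminary answer; anything left in the list is worth raising with management before testing is approved.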

Getting Approval

Getting approval is a critical event in the testing process. Before any testing actually begins, you need to make sure that you have a plan that has been approved in writing. If this is not done, you and your team might face unpleasant consequences, which might include being fired or even facing criminal charges.

If you are an independent consultant, you might also get insurance before starting any type of test. Umbrella policies and those that cover errors and omissions are commonly used in the field. These types of liability policies can help protect you should anything go wrong.

To help make sure that the approval process goes smoothly, ensure that someone is the champion of this project. This champion or project sponsor is the lead contact to upper management and your contact person. Project sponsors can be instrumental in helping you gain permission to begin testing and to provide you with the funding and materials needed to make this a success.

Ethical Hacking Report

Although you have not actually begun testing, you do need to start thinking about the final report. Throughout the entire process, you should be in close contact with management to keep them abreast of your findings. There shouldn’t be any big surprises when you submit the report. While you might have found some serious problems, they should be discussed with management before the report is written and submitted. The goal is to keep management in the loop and advised of the status of the assessment. If you find items that present a critical vulnerability, stop all tests and immediately inform management. Your priority should always be the health and welfare of the organization.

The report itself should detail the results of what was found. Vulnerabilities should be discussed, as should the potential risk they pose. Although people aren’t fired for being poor report writers, don’t expect to be promoted or praised for your technical findings if the report doesn’t communicate your findings clearly. The report should present the results of the assessment in an easily understandable and fully traceable way. The report should be comprehensive and self-contained. Most reports contain the following sections:

Introduction

Statement of work performed

Results and conclusions

Recommendations

Because most companies are not made of money and cannot secure everything, rank your recommendations so that the ones with the highest risk/highest probability appear at the top of the list.
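The ranking described above can be sketched in a few lines. This example assumes a simple scoring model in which risk is the product of probability and impact, each on a hypothetical 1 to 5 scale; the `Finding` class and the sample findings are invented for illustration, and a real engagement may use a different scoring scheme.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    """A single report finding with illustrative 1-5 ratings."""
    title: str
    probability: int  # likelihood of exploitation, 1 (low) to 5 (high)
    impact: int       # business impact if exploited, 1 (low) to 5 (high)

    @property
    def risk_score(self) -> int:
        # Assumed scoring model: probability multiplied by impact.
        return self.probability * self.impact


def rank_findings(findings: list) -> list:
    """Return findings sorted so the highest risk score comes first."""
    return sorted(findings, key=lambda finding: finding.risk_score, reverse=True)


if __name__ == "__main__":
    findings = [
        Finding("Missing patch on internal print server", 2, 2),
        Finding("SQL injection in public web form", 5, 5),
        Finding("Default admin password on router", 5, 4),
    ]
    for finding in rank_findings(findings):
        print(finding.risk_score, finding.title)
```

Listing recommendations in this order lets a client with a limited budget start at the top and stop wherever the money runs out.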

The report needs to be adequately secured while in electronic storage. Use encryption. The printed copy of the report should be marked Confidential, and while it is in its printed form, take care to protect the report from unauthorized individuals. You have an ongoing responsibility to ensure the safety of the report and all information gathered. Most consultants destroy reports and all test information after a contractually obligated period of time.

Vulnerability Research—Keeping Up with Changes

If you are moving into the IT security field or are already working in IT security, you probably already know how quickly things change in this industry. That pace of change requires the security professional to keep abreast of new/developing tools, techniques, and emerging vulnerabilities. Although someone involved in security in the early 2000s might know about Code Red or Nimda, that will do little good to combat ransomware or a Java watering hole attack. Because tools become obsolete and exploits become outdated, you want to build up a list of websites that you can use to keep up with current vulnerabilities. The sites listed here are but a few you should review:

National Vulnerability Database: http://nvd.nist.gov/

Security Tracker: http://securitytracker.com/

HackerWatch: http://www.hackerwatch.org/

Dark Reading: http://www.darkreading.com/

Exploit Database: http://www.exploit-db.com/

HackerStorm: http://hackerstorm.co.uk/

SANS Reading Room: http://www.sans.org/reading_room/

SecurityFocus: http://www.securityfocus.com/

Ethics and Legality

The word ethics is derived from the Greek word ethos (character) and from the Latin word mores (customs). Laws and ethics differ in that ethics cover the gray areas that laws do not always address. Most professional organizations, including EC-Council, have highly detailed and enforceable codes of ethics for their members. Some examples of IT organizations that have codes of ethics include:

EC-Council: https://www.eccouncil.org/code-of-ethics

ISACA: http://www.isaca.org/Certification/Code-of-Professional-Ethics/Pages/default.aspx

To become a CEH, you must have a good understanding of ethical standards because you might be presented with many ethical dilemmas during your career. You can also expect to see several questions relating to ethics on the CEH exam.

Recent FBI reports on computer crime indicate that unauthorized computer use has continued to climb. A simple review of the news on any single day usually indicates reports of a variety of cybercrime and network attacks. Hackers use computers as a tool to commit a crime or to plan, track, and control a crime against other computers or networks. Your job as an ethical hacker is to find vulnerabilities before the attackers do and help prevent the attackers from carrying out malicious activities. Tracking and prosecuting hackers can be a difficult job because international law is often ill-suited to deal with the problem. Unlike conventional crimes that occur in one location, hacking crimes might originate in India, use a system based in Singapore, and target a computer network located in Canada. Countries often hold conflicting views on what constitutes cybercrime. Even if hackers can be punished, attempting to prosecute them can be a legal nightmare. It is hard to apply national borders to a medium such as the Internet that is essentially borderless.

Overview of U.S. Federal Laws

Although some hackers might have the benefit of bouncing around the globe from system to system, your work will likely occur within the confines of the host nation. The United States and some other countries have instituted strict laws to deal with hackers and hacking. During the past 10 to 15 years, the U.S. federal government has taken a much more active role in dealing with computer crime, Internet activity, privacy, corporate threats, vulnerabilities, and exploits. These are laws you should be aware of and not become entangled in. Hacking is covered under the U.S. Code Title 18: Crimes and Criminal Procedure: Part 1: Crimes: Chapter 47: Fraud and False Statements: Sections 1029 and 1030. Each section is described here:

Section 1029, Fraud and related activity with access devices: This law gives the U.S. federal government the power to prosecute hackers who knowingly and with intent to defraud produce, use, or traffic in one or more counterfeit access devices. Access devices can be an application or hardware that is created specifically to generate any type of access credentials, including passwords, credit card numbers, long-distance telephone service access codes, PINs, and so on for the purpose of unauthorized access.

Section 1030, Fraud and related activity in connection with computers: The law covers just about any computer or device connected to a network or the Internet. It mandates penalties for anyone who accesses a computer in an unauthorized manner or exceeds his or her access rights. This is a powerful law because companies can use it to prosecute employees when they use the capability and access that companies have given them to carry out fraudulent activities.

The punishment described in Sections 1029 and 1030 for hacking into computers ranges from a fine or imprisonment for no more than 1 year up to a fine and imprisonment for no more than 20 years. This wide range of punishment depends on the seriousness of the criminal activity, what damage the hacker has done, and whether the hacker is a repeat offender. Other federal laws that address hacking include the following:

Electronic Communications Privacy Act: Mandates provisions for access, use, disclosure, interception, and privacy protections of electronic communications. The law encompasses U.S. Code Sections 2510 and 2701. According to the U.S. Code, electronic communications “means any transfer of signs, signals, writing, images, sounds, data, or intelligence of any nature transmitted in whole or in part by a wire, radio, electromagnetic, photo electronic, or photo optical system that affects interstate or foreign commerce.” This law makes it illegal for individuals to capture communication in transit or in storage. Although these laws were originally developed to secure voice communications, they now cover email and electronic communication.

Computer Fraud and Abuse Act of 1984: The Computer Fraud and Abuse Act (CFAA) of 1984 protects certain types of information that the government maintains as sensitive. The Act defines the term protected computer and imposes punishment for unauthorized or misused access into one of these protected computers or systems. The Act also mandates fines and jail time for those who commit specific computer-related actions, such as trafficking in passwords or extortion by threatening a computer. In 1992, Congress amended the CFAA to include malicious code, which was not included in the original Act.

The Cyber Security Enhancement Act of 2002: This Act specifies that hackers who carry out certain computer crimes can now receive life sentences in prison if the crime could result in another’s bodily harm or possible death. This means that if hackers disrupt a 911 system, they could spend the rest of their days in prison.

The Uniting and Strengthening America by Providing Appropriate Tools Required to Intercept and Obstruct Terrorism (USA PATRIOT) Act of 2001: Originally passed because of the World Trade Center attack on September 11, 2001, it strengthens computer crime laws and has been the subject of some controversy. This Act gives the U.S. government extreme latitude in pursuing criminals. The Act permits the U.S. government to monitor hackers without a warrant and perform sneak-and-peek searches.

The Federal Information Security Management Act (FISMA): This was signed into law in 2002 as part of the E-Government Act of 2002, replacing the Government Information Security Reform Act (GISRA). FISMA was enacted to address the information security requirements for government agencies other than those involved in national security. FISMA provides a statutory framework for securing government-owned and -operated IT infrastructures and assets.

Federal Sentencing Guidelines of 1991: Provides guidelines to judges so that sentences are handed down in a more uniform manner.

Economic Espionage Act of 1996: Defines strict penalties for those convicted of economic espionage.

Compliance Regulations

Although it’s good to know what laws your company or client must abide by, ethical hackers should have some understanding of compliance regulations, too. In the United States, laws are passed by Congress. Regulations can be created by executive departments and administrative agencies. The first step is to understand what regulations your company or client needs to comply with. Common ones include those shown in Table 1-2.

Table 1-2 Compliance Regulations and Frameworks

| Name of Law/Framework | Areas Addressed or Regulated | Responsible Agency or Entity |
| --- | --- | --- |
| Sarbanes-Oxley (SOX) Act | Corporate financial information | Securities and Exchange Commission (SEC) |
| Gramm-Leach-Bliley Act (GLBA) | Consumer financial information | Federal Trade Commission (FTC) |
| Health Insurance Portability and Accountability Act (HIPAA) | Established privacy and security regulations for the healthcare industry | Department of Health and Human Services (HHS) |
| ISO/IEC 27001:2013 | Operates as a risk management standard and provides requirements for establishing, implementing, and maintaining an information security management system | International Organization for Standardization (ISO) |
| Children’s Internet Protection Act (CIPA) | Controls Internet access to pornography in schools and libraries | Federal Communications Commission (FCC) |
| Payment Card Industry Data Security Standard (PCI-DSS) | Controls on credit card processors | Payment Card Industry (PCI) |

Typically, you will want to use a structured approach such as the following to evaluate new regulations that may lead to compliance issues:

Step 1. Interpret the law or regulation and the way it applies to the organization.

Step 2. Identify the gaps in the compliance and determine where the organization stands regarding the mandate, law, or requirement.

Step 3. Devise a plan to close the gaps identified.

Step 4. Execute the plan to bring the organization into compliance.
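Steps 2 and 3 above amount to comparing what a mandate requires with what the organization already has, then turning the difference into a work list. The following sketch shows that idea under simple assumptions: the control names, the `find_gaps` and `remediation_plan` helpers, and the default owner are hypothetical, and a real gap analysis would weigh partial compliance rather than treat each control as present or absent.

```python
def find_gaps(required: set, implemented: set) -> list:
    """Step 2: return required controls that are not yet implemented."""
    return sorted(required - implemented)


def remediation_plan(gaps: list, owner: str = "security team") -> list:
    """Step 3: turn each gap into an open work item with an owner."""
    return [{"control": gap, "owner": owner, "status": "open"} for gap in gaps]


if __name__ == "__main__":
    # Hypothetical control names for illustration only.
    required = {"Access Control", "Cryptography", "Compliance"}
    implemented = {"Access Control"}

    gaps = find_gaps(required, implemented)
    for item in remediation_plan(gaps):
        print(item)
```

Step 4 then consists of working through the plan until `find_gaps` returns an empty list for the mandate in question.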

Let’s look at one specific industry standard that CEH candidates should be aware of because it is global in nature and is a testable topic.

Payment Card Industry Data Security Standard (PCI-DSS)

PCI-DSS is a standard that most security professionals must understand because it applies in many different countries and to industries around the world. It is a proprietary information security standard that addresses credit card security. It applies to all entities that handle credit card data, such as merchants, processors, acquirers, and any other party that stores, processes, or transmits credit card data. PCI-DSS mandates a set of 12 high-level requirements that prescribe operational and technical controls to protect cardholder data. The requirements follow security best practices and are aligned across six goals:

Build and maintain a secure network that is PCI compliant

Protect cardholder data

Maintain a vulnerability management program

Implement strong access control measures

Regularly monitor and test networks

Maintain an information security policy

For companies that are found to be in noncompliance, the fines can range from $5,000 to $500,000 and are levied by banks and credit card institutions.

Summary

This chapter established that security is based on the CIA triad of confidentiality, integrity, and availability. The principles of the CIA triad must be applied to IT networks and their data. The data must be protected in storage and in transit.

Because the organization cannot provide complete protection for all of its assets, a system must be developed to rank risk and vulnerabilities. Organizations must seek to identify high-risk and high-impact events for protective mechanisms. Part of the job of an ethical hacker is to identify potential threats to these critical assets and test systems to see whether they are vulnerable to exploits.

The activities described are security tests. Ethical hackers can perform security tests from an unknown perspective (black box testing) or with all documentation and knowledge (white box testing). The type of approach to testing that is taken will depend on the time, funds, and objective of the security test. Organizations can have many aspects of their protective systems tested, such as physical security, phone systems, wireless access, insider access, and external hacking.

To perform these tests, ethical hackers need a variety of skills. They not only must be adept in the technical aspects of networks but also must understand policy and procedure. No single ethical hacker will understand all operating systems, networking protocols, or application software. That’s okay, though, because security tests typically are performed by teams of individuals, with each person bringing a unique skill or set of skills to the table.

So, even though god-like knowledge isn’t required, an ethical hacker does need to understand laws pertaining to hackers and hacking and understand that the most important part of the pretest activities is to obtain written authorization. No test should be performed without the written permission of the owner of the network or service. Following this simple rule will help you stay focused on the legitimate test objectives and avoid any activities or actions that might be seen as unethical or unlawful.