Enterprise Campus Network Virtualization

Virtualization in IT generally refers to having two or more instances of a system component or function, such as an operating system, network services, a control plane, or applications. Typically, these instances are logical (virtualized) rather than physical.

Virtualization can generally be classified into two primary models:

- Many to one: In this model, multiple physical resources appear as a single logical unit. The classical example of many-to-one virtualization is the switch clustering concept discussed earlier. Firewall clustering and a first-hop redundancy protocol (FHRP) with a single virtual IP (VIP) that front ends a pair of physical upstream network nodes (switches or routers) are other examples of the many-to-one virtualization model.

- One to many: In this model, a single physical resource can appear as many logical units, such as virtualizing an x86 server, where the software (hypervisor) hosts multiple virtual machines (VMs) to run on the same physical server. The concept of network function virtualization (NFV) can also be considered as a one-to-many system virtualization model.

Drivers to Consider Network Virtualization

To meet the current expectations of business and IT leaders, a more responsive IT infrastructure is required. Network infrastructures therefore need to move from the classical architecture (that is, one based on providing basic interconnectivity between different siloed departments within the enterprise network) to a more flexible, resilient, and adaptive architecture that can support and accelerate business initiatives and remove inefficiencies. IT and the network infrastructure will operate like a service delivery business unit that can quickly adopt and deliver services. In other words, they will become a “business enabler.” This is why network virtualization is considered one of the primary principles that enable IT infrastructures to become more dynamic and responsive to the new and rapidly changing requirements of today’s enterprises.

The following are the primary drivers of modern enterprise networks, which can motivate enterprise businesses to adopt the concept of network virtualization:

- Cost efficiency and design flexibility: Network virtualization provides a level of abstraction from the physical network infrastructure that can offer cost-effective network designs along with a higher degree of design flexibility, where multiple logical networks can be provisioned over one common physical infrastructure. This ultimately leads to lower capex because of the reduction in device complexity and in the number of devices. Similarly, it lowers opex because the operations team has fewer devices to manage.

- Support for simplified and flexible integrated security: Network virtualization also promotes flexible security designs by allowing the use of separate security policies per logical or virtualized entity, where user groups and services can be logically separated.

- Design and operational simplicity: Network virtualization simplifies the design and provision of path and traffic isolation per application, group, service, and various other logical instances that require end-to-end path isolation.

This section covers the primary network virtualization technologies and techniques that you can use to serve different requirements, highlighting the pros and cons of each technology and design approach. This can help network designers (CCDE candidates) select the most suitable design after identifying and evaluating the different design requirements (business and functional requirements). This section primarily focuses on network virtualization over the enterprise campus network. Chapter 4, “Enterprise Edge Architecture Design,” expands on this topic to cover network virtualization design options and considerations over the WAN.

Network Virtualization Design Elements

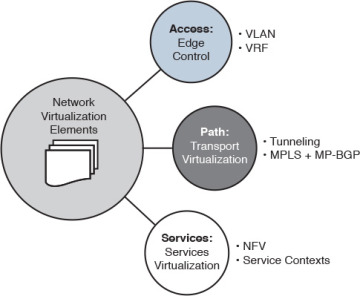

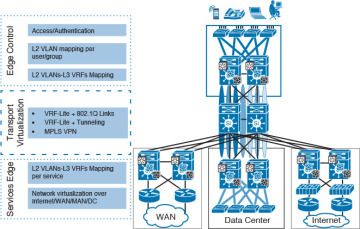

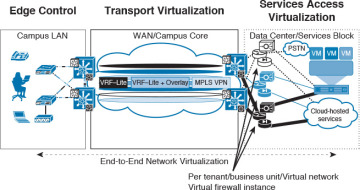

As illustrated in Figure 3-9, the main elements in an end-to-end network virtualization design are as follows:

- Edge control: This element represents the network access point. Typically, it is a host or end-user access (wired, wireless, or virtual private network [VPN]) to the network where the identification (authentication) for physical to logical network mapping can occur. For example, a contracting employee might be assigned to VLAN X, whereas internal staff is assigned to VLAN Y.

- Transport virtualization: This element represents the transport path that will carry different virtualized networks over one common physical infrastructure, such as an overlay technology like a generic routing encapsulation (GRE) tunnel. The terms path isolation and path separation are commonly used to refer to transport virtualization. Therefore, these terms are used interchangeably throughout this book.

- Services virtualization: This element represents the extension of the network virtualization concept to the services edge, which can be shared services among different logically isolated groups, such as an Internet link or a file server located in the data center that must be accessed by only one logical group (business unit).

Figure 3-9 Network Virtualization Elements
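The edge control element can be pictured as a lookup from an authenticated identity to a logical network. The following Python sketch is only an illustration of that mapping: the role names, the VLAN IDs, and the quarantine fallback are assumptions made for the example and do not come from any particular product.

```python
from dataclasses import dataclass

# Hypothetical role-to-VLAN policy; the VLAN IDs are illustrative only.
ROLE_TO_VLAN = {"contractor": 110, "staff": 120}
QUARANTINE_VLAN = 999  # assumed fallback for unknown or unauthenticated users


@dataclass(frozen=True)
class Endpoint:
    username: str
    role: str
    authenticated: bool


def assign_vlan(endpoint: Endpoint) -> int:
    """Return the VLAN (logical network) for an endpoint at the access edge."""
    if not endpoint.authenticated:
        return QUARANTINE_VLAN
    # Unknown roles also land in the quarantine VLAN in this sketch.
    return ROLE_TO_VLAN.get(endpoint.role, QUARANTINE_VLAN)


if __name__ == "__main__":
    print(assign_vlan(Endpoint("alice", "staff", True)))      # 120
    print(assign_vlan(Endpoint("bob", "contractor", True)))   # 110
    print(assign_vlan(Endpoint("guest", "staff", False)))     # 999
```

In a real deployment this decision is usually made by an authentication service at the access layer; the sketch only shows the physical-to-logical mapping that the edge control element is responsible for.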

Enterprise Network Virtualization Deployment Models

Now that you know the different elements that, individually or collectively, can be considered as the foundational elements to create network virtualization within the enterprise network architecture, this section covers how you can use these elements with different design techniques and approaches to deploy network virtualization across the enterprise campus. This section also compares these different design techniques and approaches.

Network virtualization can be categorized into the following three primary models, each of which has different techniques that can serve different requirements:

- Device virtualization

- Path isolation

- Services virtualization

Moreover, you can use the techniques of the different models individually to serve certain requirements or combined together to achieve one cohesive end-to-end network virtualization solution. Therefore, network designers must have a good understanding of the different techniques and approaches, along with their attributes, to select the most suitable virtualization technologies and design approach for delivering value to the business.

Device Virtualization

Also known as device partitioning, device virtualization represents the ability to virtualize the data plane, control plane, or both, in a certain network node, such as a switch or a router. Device-level virtualization by itself helps to achieve separation at Layer 2, Layer 3, or both, on a local device level. The following are the primary techniques used to achieve device-level network virtualization:

- Virtual LAN (VLAN): VLAN is the most common Layer 2 network virtualization technique. With VLANs, a single switch can be divided into multiple logical Layer 2 broadcast domains, each virtually separated from the others. You can use VLANs at the network edge to place an endpoint into a certain virtual network. Each VLAN has its own MAC forwarding table and, with Per-VLAN Spanning Tree (PVST), its own spanning-tree instance.

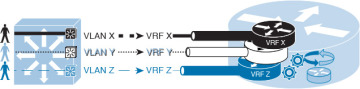

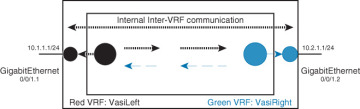

- Virtual routing and forwarding (VRF): VRFs are conceptually similar to VLANs, but from a control plane and forwarding perspective on a Layer 3 device. VRFs can be combined with VLANs to provide a virtualized Layer 3 gateway service per VLAN. As illustrated in Figure 3-10, each VLAN over an 802.1Q trunk can be mapped to a different subinterface that is assigned to a unique VRF, where each VRF maintains its own forwarding and routing instance and potentially leverages different VRF-aware routing protocols (for example, an OSPF or EIGRP instance per VRF).

Figure 3-10 Virtual Routing and Forwarding
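To make the idea of a separate routing and forwarding instance per VRF more concrete, the following Python sketch models each VRF as its own prefix table and maps 802.1Q VLAN tags to VRFs. The VRF names, VLAN IDs, prefixes, and next-hop labels are all hypothetical, and the lookup is a simplified longest-prefix match rather than how any real forwarding engine is implemented.

```python
import ipaddress
from typing import Dict, List, Optional, Tuple


class Vrf:
    """A toy routing instance with its own prefix table, independent of other VRFs."""

    def __init__(self, name: str) -> None:
        self.name = name
        self.routes: List[Tuple[ipaddress.IPv4Network, str]] = []

    def add_route(self, prefix: str, next_hop: str) -> None:
        self.routes.append((ipaddress.IPv4Network(prefix), next_hop))

    def lookup(self, destination: str) -> Optional[str]:
        """Longest-prefix match restricted to this VRF's table."""
        addr = ipaddress.IPv4Address(destination)
        matches = [(net, nh) for net, nh in self.routes if addr in net]
        if not matches:
            return None
        return max(matches, key=lambda m: m[0].prefixlen)[1]


# Hypothetical mapping of 802.1Q VLAN tags (subinterfaces) to VRFs.
vlan_to_vrf: Dict[int, Vrf] = {10: Vrf("RED"), 20: Vrf("BLUE")}

# The same prefix can exist in both VRFs without conflict.
vlan_to_vrf[10].add_route("10.1.0.0/16", "red-core")
vlan_to_vrf[20].add_route("10.1.0.0/16", "blue-core")
vlan_to_vrf[20].add_route("10.1.5.0/24", "blue-branch")


def forward(vlan_id: int, destination: str) -> Optional[str]:
    """Pick the VRF from the ingress VLAN, then look up only in that VRF."""
    vrf = vlan_to_vrf.get(vlan_id)
    return vrf.lookup(destination) if vrf else None


if __name__ == "__main__":
    print(forward(10, "10.1.5.7"))  # red-core
    print(forward(20, "10.1.5.7"))  # blue-branch
```

The point of the sketch is that the ingress VLAN selects the table, so identical destination addresses can resolve to different next hops in different virtual networks.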

Path Isolation

Path isolation refers to the concept of maintaining end-to-end logical path transport separation across the network. The end-to-end path separation can be achieved using the following main design approaches:

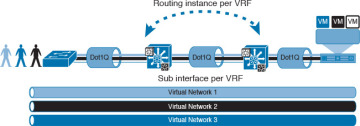

Hop by hop: This design approach, as illustrated in Figure 3-11, is based on deploying VLANs, 802.1Q trunk links, and VRFs end to end on every device in the traffic path. This design approach offers a simple and reliable path separation solution. However, for large-scale dynamic networks (with a large number of virtualized networks), it becomes complicated to manage, which limits the scalability of the design.

Figure 3-11 Hop-by-Hop Path Virtualization
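The scalability limitation of the hop-by-hop approach can be estimated with simple arithmetic. The following Python sketch assumes, for illustration only, that every virtual network needs one VRF definition on each Layer 3 device in the path and one 802.1Q subinterface on each end of every link. Real designs may differ, so treat the numbers as a rough indication of growth rather than an exact count.

```python
def hop_by_hop_objects(devices: int, links: int, virtual_networks: int) -> dict:
    """Estimate configuration objects for hop-by-hop path isolation.

    Assumptions (illustrative): one VRF per virtual network on every device,
    and one 802.1Q subinterface per virtual network on both ends of each link.
    """
    if min(devices, links, virtual_networks) < 0:
        raise ValueError("counts must be non-negative")
    vrf_definitions = devices * virtual_networks
    subinterfaces = 2 * links * virtual_networks
    return {
        "vrf_definitions": vrf_definitions,
        "subinterfaces": subinterfaces,
        "total": vrf_definitions + subinterfaces,
    }


if __name__ == "__main__":
    # 10 devices and 15 links, first with 4 and then with 20 virtual networks.
    print(hop_by_hop_objects(10, 15, 4))   # total: 160
    print(hop_by_hop_objects(10, 15, 20))  # total: 800
```

Under these assumptions the number of objects to configure grows linearly with each added virtual network across the entire path, which is why the approach suits a limited scope better than a large dynamic network.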

Multihop: This approach is based on using tunneling and other overlay technologies to provide end-to-end path isolation and carry the virtualized traffic across the network. The most common proven methods include the following:

Tunneling: Tunneling, such as GRE or multipoint GRE (mGRE) (dynamic multipoint VPN [DMVPN]), eliminates the reliance on deploying end-to-end VRFs and 802.1Q trunks across the enterprise network, because the virtualized traffic is carried over the tunnel. This method offers a higher level of scalability than the previous option and, to some extent, simpler operation. This design is ideally suited to scenarios where only a part of the network needs to have path isolation across the network.

However, for large-scale networks with multiple logical groups or business units to be separated across the enterprise, the tunneling approach can add complexity to the design and operations. For example, if the design requires path isolation for a group of users across two “distribution blocks,” tunneling can be a good fit, combined with VRFs. However, mGRE can provide the same transport and path isolation goal for larger networks with lower design and operational complexities. (See the section “WAN Virtualization,” in Chapter 4 for a detailed comparison between the different path separation approaches over different types of tunneling mechanisms.)
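The difference in operational complexity between point-to-point GRE and mGRE can be illustrated by counting tunnel interfaces. The following Python sketch assumes any-to-any connectivity between sites and one tunnel (or one multipoint tunnel interface) per virtual network. Both are assumptions chosen for the comparison, not requirements of either technology.

```python
def p2p_gre_tunnel_interfaces(sites: int, virtual_networks: int) -> int:
    """Full mesh of point-to-point tunnels: each pair of sites needs a tunnel
    per virtual network, and each tunnel has an interface at both ends."""
    return sites * (sites - 1) * virtual_networks


def mgre_tunnel_interfaces(sites: int, virtual_networks: int) -> int:
    """One multipoint tunnel interface per site per virtual network."""
    return sites * virtual_networks


if __name__ == "__main__":
    for sites in (2, 5, 10):
        p2p = p2p_gre_tunnel_interfaces(sites, virtual_networks=3)
        mgre = mgre_tunnel_interfaces(sites, virtual_networks=3)
        print(f"{sites} sites: p2p GRE={p2p}, mGRE={mgre}")
```

With two sites the counts are identical, which matches the observation that plain GRE fits the case of two distribution blocks. As the number of sites grows, the point-to-point count grows quadratically while the mGRE count grows linearly.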

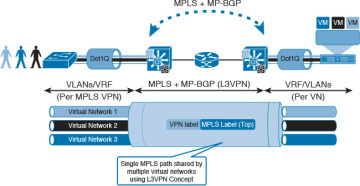

MPLS VPN: This approach converts the enterprise network into a service provider type of network, where the core is Multiprotocol Label Switching (MPLS) enabled and the distribution layer switches act as provider edge (PE) devices. As in service provider networks, each PE (distribution block) exchanges VPN routing over MP-BGP sessions, as shown in Figure 3-12. (Route reflectors [RRs] can also be introduced to reduce the complexity of full-mesh MP-BGP peering sessions.)

Figure 3-12 MPLS VPN-Based Path Virtualization
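The benefit of route reflectors can be quantified by counting MP-BGP sessions. The following Python sketch compares a full mesh between PEs with a design in which every PE peers only with a set of route reflectors. It assumes the route reflectors are dedicated nodes (not PEs themselves) and are fully meshed with each other, which is one common arrangement but not the only one.

```python
def full_mesh_sessions(pes: int) -> int:
    """Every PE peers with every other PE: n(n-1)/2 sessions."""
    return pes * (pes - 1) // 2


def route_reflector_sessions(pes: int, rrs: int) -> int:
    """Every PE peers with every RR, plus a full mesh between the RRs.

    Assumption: RRs are dedicated nodes and are not counted as PEs.
    """
    return pes * rrs + rrs * (rrs - 1) // 2


if __name__ == "__main__":
    print(full_mesh_sessions(20))            # 190
    print(route_reflector_sessions(20, 2))   # 41
```

In this example, 20 distribution blocks acting as PEs need 190 sessions in a full mesh but only 41 with two route reflectors, and adding a PE requires new sessions only toward the route reflectors.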

Furthermore, L2VPN capabilities can be introduced in this architecture, such as Ethernet over MPLS (EoMPLS), to provide extended Layer 2 communications across different distribution blocks if required. With this design approach, the end-to-end virtualization and traffic separation can be simplified to a very large extent with a high degree of scalability. (All the MPLS design considerations and concepts covered in the Service Provider part—Chapter 5, “Service Provider Network Architecture Design,” and Chapter 6, “Service Provider MPLS VPN Services Design,”—in this book are applicable if this design model is adopted by the enterprise.)

Figure 3-13 summarizes the different enterprise campus network virtualization design techniques.

Figure 3-13 Enterprise Campus Network Virtualization Techniques

As mentioned earlier in this section, it is important for network designers to understand the differences between the various network virtualization techniques. Table 3-3 compares these different techniques in a summarized way from different design angles.

Table 3-3 Network Virtualization Techniques Comparison

| | End to End (VLAN + 802.1Q + VRF) | VLANs + VRFs + GRE Tunnels | VLANs + VRFs + mGRE Tunnels | MPLS Core with MP-BGP |
| --- | --- | --- | --- | --- |
| Scalability | Low | Low | Moderate | High |
| Operational complexity | High | Moderate | Moderate | Moderate to high |
| Design flexibility | Low | Moderate | Moderate | High |
| Architecture | Per hop end-to-end virtualization | P2P (multihop end-to-end virtualization) | P2MP (multihop end-to-end virtualization) | MPLS-L3VPN-based virtualization |
| Operation staff routing expertise | Basic | Medium | Medium | Advanced |
| Ideal for | Limited NV scope in terms of size and complexity | Interconnecting specific blocks with NV or as an interim solution | Medium to large overlaid NV design | Large to very large (global scale) end-to-end NV design |

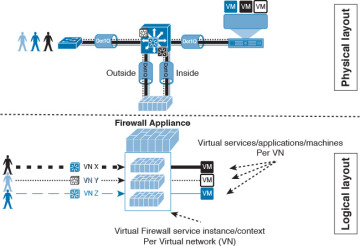

Services Virtualization

One of the main goals of virtualization is to separate services access into different logical groups, such as user groups or departments. However, in some scenarios, service access is mixed: some services must be accessed only by a certain group, whereas others are shared among different groups, such as a file server in the data center or Internet access, as shown in Figure 3-14.

Figure 3-14 End-to-end Path and Services Virtualization

Therefore, in scenarios like this where service access has to be separated per virtual network or group, the concept of network virtualization must be extended to the services access edge, such as a server with multiple VMs or an Internet edge router with single or multiple Internet links.

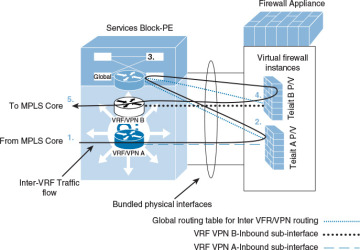

Figure 3-15 Firewall Virtual Instances

Furthermore, in multitenant network environments, multiple security contexts offer a flexible and cost-effective solution for enterprises (and for service providers). This approach enables network operators to partition a single pair of redundant firewalls or a single firewall cluster into multiple virtual firewall instances per business unit or tenant. Each tenant can then deploy and manage its own security policies and service access, which are virtually separated. This approach also allows controlled intertenant communication. For example, in a typical multitenant enterprise campus network environment with MPLS VPN (L3VPN) enabled at the core, traffic between different tenants (VPNs) is normally routed via a firewalling service for security and control (who can access what), as illustrated in Figure 3-16.

Figure 3-16 Intertenant Services Access Traffic Flow

Figure 3-17 zooms in on the firewall services contexts to show a more detailed view (logical/virtualized view) of the traffic flow between the different tenants/VPNs (A and B), where each tenant has its own virtual firewall service instance located at the services block (or at the data center) of the enterprise campus network.

Figure 3-17 Intertenant Services Access Traffic Flow with Virtual Firewall Instances
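The controlled intertenant communication described above can be sketched as two independent policy checks, one in each tenant's virtual firewall instance. The following Python example is a simplified model only: the tenant names, the rule format (peer tenant plus TCP port), and the assumption that a flow must be permitted by both the source and the destination context are illustrative choices, not a description of any specific firewall.

```python
from dataclasses import dataclass, field
from typing import Set, Tuple

Rule = Tuple[str, int]  # (peer tenant, TCP port), a hypothetical rule format


@dataclass
class FirewallContext:
    """A toy virtual firewall instance owned and managed by one tenant."""
    tenant: str
    allow_out: Set[Rule] = field(default_factory=set)
    allow_in: Set[Rule] = field(default_factory=set)


def intertenant_permitted(src: FirewallContext, dst: FirewallContext, port: int) -> bool:
    """A flow passes only if the source context allows it outbound
    and the destination context allows it inbound."""
    return (dst.tenant, port) in src.allow_out and (src.tenant, port) in dst.allow_in


if __name__ == "__main__":
    tenant_a = FirewallContext("A", allow_out={("B", 443)})
    tenant_b = FirewallContext("B", allow_in={("A", 443)})
    print(intertenant_permitted(tenant_a, tenant_b, 443))  # True
    print(intertenant_permitted(tenant_a, tenant_b, 22))   # False
    print(intertenant_permitted(tenant_b, tenant_a, 443))  # False
```

Because each context holds its own rule sets, either tenant can tighten its policy without touching the other tenant's configuration, which is the operational benefit of per-tenant virtual firewall instances.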

In addition, the following are the common techniques that facilitate accessing shared applications and network services in multitenant environments:

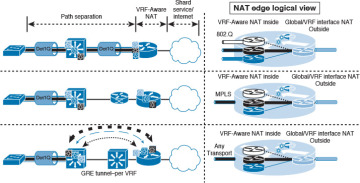

VRF-Aware Network Address Translation (NAT): A common requirement in today’s multitenant environments with network and service virtualization enabled is to give each virtual (tenant) network access to certain shared services, hosted either on premises (such as at the enterprise data center or services block) or externally (in a public cloud). Providing Internet access to the different tenant (virtual) networks is another common requirement. Private IP address overlap is common in this type of environment, so traffic separation between the tenants must be maintained. NAT is a common and cost-effective way to do this: translation is performed per tenant without compromising the path separation requirements between the different tenants’ virtual networks. When NAT is combined with different virtual network instances (VRFs), it is commonly referred to as VRF-Aware NAT, as shown in Figure 3-18.

Figure 3-18 VRF-Aware NAT
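The key idea behind VRF-aware NAT is that the translation entry is keyed by the VRF as well as by the inside address, so overlapping private addresses in different tenants never collide. The following Python sketch models this with a simple port address translation table. The class name, the VRF names, the shared public address (taken from a documentation range), and the starting port are all assumptions made for illustration.

```python
import itertools
from typing import Dict, Optional, Tuple

InsideKey = Tuple[str, str, int]  # (vrf, inside IP, inside port)


class VrfAwareNat:
    """A toy port address translation table that includes the VRF in its key."""

    def __init__(self, public_ip: str, first_port: int = 20000) -> None:
        self.public_ip = public_ip
        self._next_port = itertools.count(first_port)
        self._outbound: Dict[InsideKey, int] = {}
        self._inbound: Dict[int, InsideKey] = {}

    def translate_out(self, vrf: str, inside_ip: str, inside_port: int) -> Tuple[str, int]:
        """Return the public (IP, port) for an inside flow, creating an entry if needed."""
        key = (vrf, inside_ip, inside_port)
        if key not in self._outbound:
            port = next(self._next_port)
            self._outbound[key] = port
            self._inbound[port] = key
        return (self.public_ip, self._outbound[key])

    def translate_in(self, public_port: int) -> Optional[InsideKey]:
        """Map return traffic back to the owning VRF and inside address."""
        return self._inbound.get(public_port)


if __name__ == "__main__":
    nat = VrfAwareNat("203.0.113.1")
    # The same private address and port exist in two tenants.
    print(nat.translate_out("TENANT_A", "10.0.0.5", 5000))  # ('203.0.113.1', 20000)
    print(nat.translate_out("TENANT_B", "10.0.0.5", 5000))  # ('203.0.113.1', 20001)
    print(nat.translate_in(20001))  # ('TENANT_B', '10.0.0.5', 5000)
```

Return traffic is mapped back to the correct tenant because the reverse entry stores the VRF, which is what preserves path separation even though both tenants share one public address.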

Figure 3-19 VRF-aware Services Infrastructure

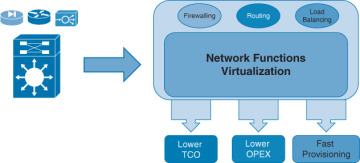

Network function virtualization (NFV): The concept of NFV is based on virtualizing network functions that typically require a dedicated physical node, appliances, or interfaces. In other words, NFV can potentially take any network function typically residing in purpose-built hardware and abstract it from that hardware. As depicted in Figure 3-20, this concept offers businesses several benefits, including the following:

- Reduce the total cost of ownership (TCO) by reducing the required number and diversity of specialized appliances

- Reduce operational cost (for example, less power and space)

- Offer a cost-effective capital investment

- Reduce the level of complexity of integration and network operations

- Reduce time to market for the business by offering the ability to enable specialized network services (especially in multitenant environments, where a separate network function or service per tenant can be provisioned faster)

Figure 3-20 NFV Benefits

This concept helps businesses to adopt and deploy new services quickly (faster time to market), and is consequently considered a business innovation enabler. This is simply because purpose-built hardware functionalities have now been virtualized, and it is a matter of service enablement rather than relying on new hardware (along with infrastructure integration complexities).